Marketing automation workflows promise efficiency and scale, but without consistent monitoring, even the most sophisticated campaigns can underperform or waste budget. The difference between average and exceptional results often comes down to tracking the right metrics at the right frequency. Weekly performance audits reveal patterns that monthly reviews miss, allowing you to course-correct before minor issues become major revenue drains.

Teams that implement structured weekly audits often report conversion improvements of 35-45% within three months. Frequent monitoring creates accountability, surfaces optimization opportunities faster, and builds institutional knowledge about what drives results in your specific market. The key is knowing which metrics matter most and establishing a sustainable review process that your team will actually follow.

This guide breaks down the essential metrics that deliver the highest return on your audit time. Each one connects directly to revenue outcomes and provides actionable signals about workflow health. By focusing your weekly reviews on these specific data points, you’ll spend less time analyzing vanity metrics and more time making decisions that improve performance.

Email Delivery Rate and List Health Indicators

Your delivery rate represents the percentage of sent emails that receiving servers accept rather than bounce or reject. This fundamental metric determines whether your carefully crafted messages ever get a chance to be seen; note that “delivered” means accepted by the receiving server, not necessarily placed in the inbox. A healthy delivery rate sits above 98%, and anything below 95% signals serious problems with list hygiene or sender reputation that will drag down every downstream metric.
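
The delivery-rate arithmetic is simple enough to script into a weekly check. The sketch below assumes you can export sent and bounced counts per workflow; the function names are hypothetical, and labeling the 95-98% band as "watch" is an assumption that fills the gap between the two thresholds above.

```python
def delivery_rate(sent: int, bounced: int) -> float:
    """Percentage of sent emails accepted by receiving servers (i.e. not bounced)."""
    if sent <= 0:
        raise ValueError("sent must be positive")
    return 100.0 * (sent - bounced) / sent


def delivery_health(rate: float) -> str:
    """Classify a delivery rate using the 98% / 95% thresholds from the text.
    The middle 'watch' band is an assumption, not a cited standard."""
    if rate > 98.0:
        return "healthy"
    if rate >= 95.0:
        return "watch"
    return "critical"


# Hypothetical weekly totals for one workflow.
rate = delivery_rate(sent=10_000, bounced=320)
print(f"{rate:.1f}% -> {delivery_health(rate)}")  # 96.8% -> watch
```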

Monitor both hard bounces and soft bounces separately during your weekly audit. Hard bounces indicate permanent delivery failures from invalid email addresses, while soft bounces suggest temporary issues like full inboxes or server problems. Your automation platform should automatically suppress hard bounces, but manual review ensures this process works correctly and helps identify patterns like typos in signup forms or data import errors.
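
A minimal sketch of that manual review, assuming bounce records and the suppression list can be exported as plain Python data (the field names `type` and `source` and the sample addresses are hypothetical). It splits hard from soft bounces, lists hard bounces the platform failed to suppress, and counts hard bounces per lead source, so a misspelled domain such as `exmaple.com` clusters under one import.

```python
from collections import Counter

# Hypothetical bounce records exported from an automation platform.
bounces = [
    {"email": "ana@example.com", "type": "hard", "source": "webinar_form"},
    {"email": "bo@exmaple.com", "type": "hard", "source": "csv_import"},
    {"email": "cy@example.com", "type": "soft", "source": "webinar_form"},
    {"email": "di@exmaple.com", "type": "hard", "source": "csv_import"},
]
suppressed = {"ana@example.com", "bo@exmaple.com"}  # platform's suppression list


def audit_bounces(bounces, suppressed):
    """Count bounces by type, find hard bounces that escaped suppression,
    and count hard bounces per lead source to expose bad imports or form typos."""
    by_type = Counter(b["type"] for b in bounces)
    unsuppressed = sorted(
        b["email"] for b in bounces
        if b["type"] == "hard" and b["email"] not in suppressed
    )
    hard_by_source = Counter(b["source"] for b in bounces if b["type"] == "hard")
    return by_type, unsuppressed, hard_by_source


by_type, unsuppressed, hard_by_source = audit_bounces(bounces, suppressed)
print(by_type["hard"], by_type["soft"])  # 3 1
print(unsuppressed)                      # ['di@exmaple.com']
print(hard_by_source.most_common(1))     # [('csv_import', 2)]
```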

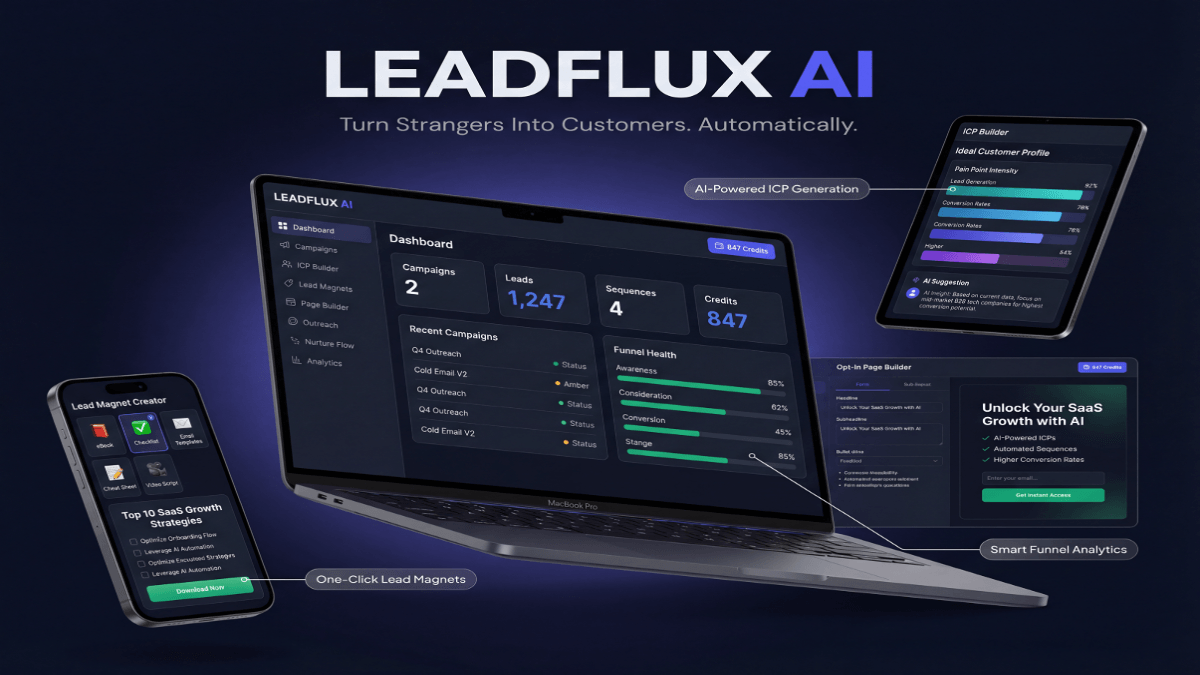

The most effective marketers today build a smarter lead generation funnel using automation rather than relying on manual outreach alone.

List decay happens naturally at approximately 22-25% annually as people change jobs, abandon email addresses, or update their contact information. Weekly monitoring helps you spot accelerated decay that might indicate data quality problems from specific lead sources. If your bounce rate suddenly spikes, investigate recent list additions, purchased lists, or integration issues that might be introducing bad data into your workflows.
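
To tell accelerated decay from the natural kind, it can help to convert the annual figure into a weekly baseline and compare each lead source against it. This is a rough heuristic sketch: the source names and rates are hypothetical, and the 3x alert multiplier is an assumption you should tune to your own history.

```python
def weekly_decay_rate(annual_rate: float) -> float:
    """Convert an annual list-decay rate into the equivalent compounded weekly rate."""
    return 1 - (1 - annual_rate) ** (1 / 52)


# Midpoint of the 22-25% annual range cited above (about 0.5% per week).
baseline = weekly_decay_rate(0.235)

# Hypothetical weekly hard-bounce rates among contacts added from each source.
source_bounce_rates = {"organic_signup": 0.004, "partner_list": 0.031, "trade_show": 0.006}

# Flag sources bouncing at more than 3x the natural-decay baseline (multiplier is an assumption).
flagged = {s: r for s, r in source_bounce_rates.items() if r > 3 * baseline}
print(f"baseline {baseline:.4f}/week; flagged: {sorted(flagged)}")
```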

Track your spam complaint rate alongside delivery metrics, aiming to stay below 0.1% of total sends. Even small increases in spam complaints damage sender reputation with email service providers, reducing deliverability across your entire domain. When complaints rise, examine the specific workflows generating reports and audit whether you’re honoring unsubscribe requests promptly, setting accurate expectations during signup, and maintaining appropriate send frequency.
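
Checking complaint rates per workflow, rather than only account-wide, points you directly at the sequences generating reports. A minimal sketch with hypothetical workflow names and counts:

```python
# Hypothetical weekly counts per workflow: (emails sent, spam complaints).
weekly = {"welcome": (12_000, 6), "promo_blast": (8_000, 14), "renewal": (3_000, 2)}

COMPLAINT_LIMIT = 0.001  # 0.1% of sends, the target cited above


def complaint_rates(counts):
    """Return each workflow's complaint rate as a fraction of sends."""
    return {wf: complaints / sent for wf, (sent, complaints) in counts.items() if sent}


# Workflows at or above the limit are the ones to audit first.
over_limit = sorted(wf for wf, r in complaint_rates(weekly).items() if r >= COMPLAINT_LIMIT)
print(over_limit)  # ['promo_blast']
```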

Workflow Conversion Rate by Stage and Segment

Overall workflow conversion rate tells you whether prospects complete your desired action, but stage-by-stage analysis reveals where sequences lose momentum. Calculate conversion rates between each step in your automation to identify specific friction points. A welcome series might show open rates of 45% on message one, 32% on message two, and 8% on message three; the steep fall after message two signals that the second email creates drop-off worth investigating.
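
Step-to-step rates make that drop-off explicit. The sketch below assumes you can count how many contacts engaged with each message; the counts are hypothetical and mirror the 45% / 32% / 8% example on a base of 10,000 recipients.

```python
def step_conversion(counts):
    """Given how many contacts reached each step, return step-to-step rates
    and the index of the transition with the lowest rate (largest relative drop)."""
    rates = [nxt / cur if cur else 0.0 for cur, nxt in zip(counts, counts[1:])]
    worst = min(range(len(rates)), key=rates.__getitem__)
    return rates, worst


# Hypothetical counts of contacts who engaged with each welcome-series message.
engaged = [4500, 3200, 800]
rates, worst = step_conversion(engaged)
print([round(r, 2) for r in rates], f"biggest drop after step {worst + 1}")
# [0.71, 0.25] biggest drop after step 2
```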

Segment your conversion analysis by key audience characteristics including traffic source, company size, industry, and engagement history. The same workflow often performs dramatically differently across segments. Enterprise prospects might convert at 12% while small businesses hit 28%, suggesting you need separate workflows tailored to each audience’s decision-making process and timeline.
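
Segment-level rates are a grouped count. A minimal sketch, assuming each workflow entrant can be exported as a hypothetical `(segment, converted)` pair:

```python
from collections import defaultdict

# Hypothetical workflow entrants: (segment, converted?)
entrants = [("enterprise", True), ("enterprise", False), ("enterprise", False),
            ("smb", True), ("smb", True), ("smb", False)]


def conversion_by_segment(rows):
    """Return the conversion rate per segment from (segment, converted) pairs."""
    totals, wins = defaultdict(int), defaultdict(int)
    for segment, converted in rows:
        totals[segment] += 1
        wins[segment] += int(converted)  # True counts as one conversion
    return {seg: wins[seg] / totals[seg] for seg in totals}


print(conversion_by_segment(entrants))
```

The same grouping works for traffic source, company size, or industry by changing which field you put in the first position.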

Benchmark your conversion rates against previous periods to establish normal performance ranges for each workflow. Create simple upper and lower control limits from historical data; when current performance falls outside these limits, trigger a deeper investigation. This approach prevents you from overreacting to normal statistical variation while ensuring you catch genuine performance shifts quickly.
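
One common way to set such limits is the mean plus or minus k standard deviations of recent weekly values, in the style of a Shewhart control chart. The sketch below assumes eight hypothetical weeks of history; k=3 is a conventional default, and a smaller k flags more aggressively.

```python
from statistics import mean, stdev


def control_limits(history, k=3.0):
    """Lower and upper limits: mean +/- k sample standard deviations of history."""
    m, s = mean(history), stdev(history)
    return m - k * s, m + k * s


def out_of_control(current, history, k=3.0):
    """True if the current value falls outside the historical control limits."""
    lower, upper = control_limits(history, k)
    return not (lower <= current <= upper)


# Hypothetical last eight weekly conversion rates (%) for one workflow.
history = [4.1, 3.8, 4.4, 4.0, 3.9, 4.2, 4.3, 4.0]
print(out_of_control(4.2, history), out_of_control(2.9, history))  # False True
```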

Pay special attention to micro-conversions that indicate engagement even when prospects don’t complete the primary goal. Click-through rates, content downloads, page visits, and social shares demonstrate interest and help you understand which messages resonate. Workflows with strong micro-conversion rates but weak final conversions often need adjustments to their calls-to-action or offer positioning rather than complete redesigns.
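
The triage rule in the last sentence can be written as a simple check. Both floors below are hypothetical placeholders, not benchmarks; derive real ones from each workflow's own history.

```python
def diagnose(micro_rate: float, final_rate: float,
             micro_floor: float = 0.15, final_floor: float = 0.03) -> str:
    """Flag workflows whose micro-conversions are strong but final conversions weak.
    micro_floor and final_floor are hypothetical defaults."""
    if micro_rate >= micro_floor and final_rate < final_floor:
        return "adjust CTA / offer positioning"
    return "no micro-vs-final gap"


# Hypothetical workflows: (micro-conversion rate, final conversion rate)
workflows = {"webinar_nurture": (0.22, 0.01), "trial_onboarding": (0.18, 0.06)}
for name, (micro, final) in workflows.items():
    print(name, "->", diagnose(micro, final))
```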

Lead Response Time and Follow-Up Velocity

Widely cited lead-response research shows that contacting leads within five minutes of an inquiry makes conversion far more likely than waiting thirty minutes. Your weekly audit should measure the average response time from trigger event to first automated touchpoint. Marketing automation exists specifically to eliminate human delay, so any workflow taking more than two minutes to initiate after a qualifying action needs immediate attention.
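
Response time is the gap between two timestamps, so the audit reduces to a subtraction and a threshold. A minimal sketch, assuming you can export trigger and first-touch times per lead (the timestamps are hypothetical):

```python
from datetime import datetime, timedelta

# Hypothetical (trigger time, first automated touch time) pairs per lead.
events = [
    (datetime(2024, 5, 6, 9, 0, 0), datetime(2024, 5, 6, 9, 0, 40)),
    (datetime(2024, 5, 6, 9, 5, 0), datetime(2024, 5, 6, 9, 6, 10)),
    (datetime(2024, 5, 6, 9, 10, 0), datetime(2024, 5, 6, 9, 14, 30)),
]
SLA = timedelta(minutes=2)  # the two-minute ceiling suggested above

latencies = [touch - trigger for trigger, touch in events]
average = sum(latencies, timedelta()) / len(latencies)
breaches = [i for i, lat in enumerate(latencies) if lat > SLA]  # indices of slow leads
print(f"average {average.total_seconds():.0f}s, breaches at {breaches}")
```

Reporting individual breaches alongside the average matters: here one slow lead pushes the average past two minutes even though the other two responses were fast.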

Beyond initial response, track the cadence and consistency of your follow-up sequences. Calculate the average time between touches in multi-step workflows and ensure spacing aligns with your sales cycle length and buyer urgency. B2B enterprise solutions might space touches 3-5 days apart, while high-velocity transactional products perform better with daily or twice-daily sequences during active buying windows.
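
Average spacing between touches can be computed from each contact's send log. A minimal sketch with hypothetical send dates for one contact in an enterprise nurture, checked against the 3-5 day range mentioned above:

```python
from datetime import datetime
from statistics import mean


def average_gap_days(touch_times):
    """Average spacing, in days, between consecutive touches for one contact."""
    ordered = sorted(touch_times)
    gaps = [(b - a).total_seconds() / 86_400 for a, b in zip(ordered, ordered[1:])]
    return mean(gaps) if gaps else None  # None when there is fewer than two touches


# Hypothetical send log for one contact in a B2B enterprise nurture.
touches = [datetime(2024, 5, 1), datetime(2024, 5, 4), datetime(2024, 5, 9)]
gap = average_gap_days(touches)
print(gap, 3 <= gap <= 5)  # 4.0 True
```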

Measure what percentage of leads receive all planned touches versus those who exit workflows early through conversions, unsubscribes, or meeting other exit criteria. Low completion rates might indicate sequences that are too long, too aggressive, or poorly matched to actual buyer behavior. High completion rates without conversions suggest your workflow isn’t compelling action and needs stronger offers or clearer value propositions.
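
A breakdown of how contacts left a sequence separates full completion from each kind of early exit. The outcome labels below are hypothetical; "completed" here means the contact received every planned touch without converting.

```python
from collections import Counter

# Hypothetical outcome per contact who entered a multi-touch sequence.
outcomes = ["completed", "converted", "unsubscribed", "completed",
            "converted", "completed", "exit_criteria", "completed"]


def completion_breakdown(outcomes):
    """Share of contacts by how they left the workflow."""
    counts = Counter(outcomes)
    total = len(outcomes)
    return {reason: n / total for reason, n in counts.items()}


shares = completion_breakdown(outcomes)
print(shares["completed"], shares["converted"])  # 0.5 0.25
```

A high "completed" share paired with a low "converted" share is the pattern the paragraph describes as a workflow that is not compelling action.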

Monitor the percentage of workflows that hand off to sales at the optimal time based on lead scoring thresholds and behavioral triggers. Premature handoffs waste sales resources on unqualified prospects, while delayed handoffs let hot leads cool. Weekly review of these transitions helps you refine the criteria that determine when automation should yield to human intervention.
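
Handoff timing can be labeled by comparing the lead score and timestamps at handoff against your criteria. In this sketch the score threshold of 60 and the four-hour acceptable lag are hypothetical values, not recommendations.

```python
from datetime import datetime, timedelta

THRESHOLD = 60                  # hypothetical lead-score threshold for sales handoff
MAX_DELAY = timedelta(hours=4)  # hypothetical acceptable lag after crossing it


def classify_handoff(score_at_handoff, crossed_at, handed_off_at):
    """Label a sales handoff as premature, delayed, or on time.
    crossed_at is when the score first reached THRESHOLD (None if it never did)."""
    if score_at_handoff < THRESHOLD or crossed_at is None:
        return "premature"
    if handed_off_at - crossed_at > MAX_DELAY:
        return "delayed"
    return "on_time"


t0 = datetime(2024, 5, 6, 10, 0)
print(classify_handoff(45, None, t0))                     # premature
print(classify_handoff(72, t0, t0 + timedelta(hours=1)))  # on_time
print(classify_handoff(80, t0, t0 + timedelta(days=2)))   # delayed
```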

Revenue Attribution and Pipeline Contribution

Connecting automation workflows to actual revenue validates their business impact and justifies continued investment. Track first-touch, last-touch, and multi-touch attribution for deals influenced by automated campaigns. Each attribution model tells a different story about workflow effectiveness—first-touch highlights top-of-funnel performance, last-touch shows closing effectiveness, and multi-touch reveals the compound impact of nurture sequences.
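
The three models differ only in how they split a deal's value across the workflows that touched it. This sketch uses the simple linear model (equal credit per touch) as its multi-touch example; position-based or time-decay models are other common choices. Workflow names and the deal value are hypothetical.

```python
def attribute(touches, deal_value):
    """Credit a deal's value to workflows under three attribution models.
    touches: ordered list of workflow names that touched the deal.
    Multi-touch here is the linear model: equal credit per touch."""
    first = {touches[0]: deal_value}
    last = {touches[-1]: deal_value}
    linear = {}
    share = deal_value / len(touches)
    for wf in touches:
        linear[wf] = linear.get(wf, 0.0) + share
    return {"first_touch": first, "last_touch": last, "linear": linear}


credit = attribute(["webinar_invite", "nurture", "nurture", "demo_followup"], 40_000)
print(credit["linear"])
# {'webinar_invite': 10000.0, 'nurture': 20000.0, 'demo_followup': 10000.0}
```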

Calculate the pipeline value generated by each major workflow weekly, comparing it to the time and resources invested in creation and optimization. This cost-per-pipeline-dollar metric helps you prioritize which workflows deserve more development attention and which should be deprecated. Workflows generating less than $3 in pipeline for every $1 invested typically need significant improvements or should be eliminated.
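
Applying the $3-per-$1 floor is a ratio check per workflow. A minimal sketch, assuming you can estimate weekly pipeline and cost (build plus optimization time) per workflow; the figures are hypothetical.

```python
MIN_RATIO = 3.0  # $3 of pipeline per $1 invested, the floor cited above

# Hypothetical weekly figures per workflow.
workflows = {
    "webinar_nurture": {"pipeline": 48_000, "cost": 9_000},
    "trial_onboarding": {"pipeline": 15_000, "cost": 6_000},
}


def pipeline_verdicts(workflows):
    """Pipeline dollars per invested dollar, with a keep / rework verdict."""
    out = {}
    for name, w in workflows.items():
        ratio = w["pipeline"] / w["cost"]
        out[name] = (round(ratio, 2), "keep" if ratio >= MIN_RATIO else "improve or retire")
    return out


for name, (ratio, verdict) in pipeline_verdicts(workflows).items():
    print(f"{name}: ${ratio} pipeline per $1 -> {verdict}")
```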

Measure the average deal size for opportunities influenced by automation versus those that convert through other channels. Marketing automation often accelerates smaller deals while providing essential education for larger opportunities that ultimately close through relationship selling. Understanding this dynamic helps set realistic expectations and prevents unfair comparisons between automated and high-touch sales processes.

Track the sales cycle length for automation-influenced deals compared to your baseline. Effective nurture workflows should educate prospects and address objections proactively, compressing the time from first contact to closed deal. If automated leads take significantly longer to close than other sources, your sequences might be attracting lower-quality prospects or creating confusion rather than clarity about your solution.
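
Both comparisons in this part of the audit, average deal size and sales-cycle length, reduce to grouped averages over closed deals. A minimal sketch, assuming each deal can be exported as a hypothetical `(automation_influenced, amount, days_to_close)` tuple:

```python
from statistics import mean

# Hypothetical closed-won deals: (automation_influenced, amount, days_to_close)
deals = [(True, 12_000, 45), (True, 8_000, 38), (True, 20_000, 60),
         (False, 30_000, 90), (False, 25_000, 75)]


def compare(deals):
    """Average deal size and sales-cycle length for automation-influenced vs other deals."""
    out = {}
    for influenced in (True, False):
        group = [d for d in deals if d[0] == influenced]
        out["automation" if influenced else "other"] = {
            "avg_amount": mean(d[1] for d in group),
            "avg_cycle_days": mean(d[2] for d in group),
        }
    return out


print(compare(deals))
```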

Engagement Decay and Re-Engagement Effectiveness

Every workflow experiences natural engagement decay as subscribers lose interest, change roles, or achieve their initial goals. Monitor the percentage of subscribers who stop engaging with your emails over time, defining engagement as opens, clicks, or other measurable interactions within the past 30 days. When engagement rates drop below 15-20% of your active list, you’re sending messages to audiences who no longer care, damaging deliverability and wasting resources.
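
The engaged share over a 30-day window can be computed from each subscriber's last-interaction date. A minimal sketch with a hypothetical five-subscriber snapshot; compare the result against the 15-20% floor above.

```python
from datetime import date, timedelta


def engaged_share(last_engaged, today, window_days=30):
    """Fraction of active subscribers with any tracked interaction in the window.
    last_engaged maps subscriber -> date of last open/click (None if never)."""
    cutoff = today - timedelta(days=window_days)
    engaged = sum(1 for d in last_engaged.values() if d is not None and d >= cutoff)
    return engaged / len(last_engaged)


# Hypothetical snapshot of five subscribers.
today = date(2024, 6, 1)
snapshot = {"a": date(2024, 5, 28), "b": date(2024, 3, 2), "c": None,
            "d": date(2024, 5, 5), "e": date(2024, 1, 15)}
share = engaged_share(snapshot, today)
print(f"{share:.0%} engaged")  # 40% engaged
```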

Create specific re-engagement workflows triggered when subscribers show declining activity patterns. Test different re-activation approaches including preference updates, exclusive content offers, direct value questions, and clean unsubscribe prompts. Measure what percentage of declining subscribers each approach successfully reactivates versus how many permanently disengage, then allocate effort toward the highest-performing tactics.
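
Ranking tactics by reactivation rate, while keeping the permanent-disengagement rate alongside it, makes the allocation decision concrete. The tactic names and counts below are hypothetical test results.

```python
# Hypothetical re-engagement test results per tactic:
# (contacts entered, reactivated within the window, unsubscribed or suppressed)
results = {
    "preference_update": (400, 52, 30),
    "exclusive_content": (400, 68, 22),
    "direct_question": (400, 41, 18),
    "clean_unsubscribe": (400, 25, 110),
}


def rank_tactics(results):
    """Sort tactics by reactivation rate; also report the permanent-disengagement rate."""
    rows = [(t, won / n, lost / n) for t, (n, won, lost) in results.items()]
    return sorted(rows, key=lambda r: r[1], reverse=True)


for tactic, reactivated, lost in rank_tactics(results):
    print(f"{tactic:18} reactivated {reactivated:.1%}  disengaged {lost:.1%}")
```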

Track your email list growth rate net of unsubscribes and engagement-based suppressions. Healthy lists grow 2-5% monthly after accounting for natural attrition, indicating strong top-of-funnel performance and effective value delivery that minimizes churn. Stagnant or shrinking lists signal fundamental problems with acquisition strategy, content relevance, or send frequency that weekly monitoring helps surface before they become critical.
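
Net growth subtracts both unsubscribes and engagement-based suppressions from new subscribers before dividing by the starting list size. A minimal sketch with hypothetical monthly figures; growth above 5% is not treated as a problem, since the text only flags stagnant, shrinking, or slow-growing lists.

```python
def net_growth_rate(start_size, new_subs, unsubscribes, suppressions):
    """Monthly list growth net of unsubscribes and engagement-based suppressions."""
    return (new_subs - unsubscribes - suppressions) / start_size


def classify_growth(rate):
    """Compare a monthly net growth rate with the 2-5% range cited above."""
    if rate <= 0:
        return "stagnant or shrinking"
    if rate < 0.02:
        return "below the 2-5% range"
    return "within or above range"


# Hypothetical month: 50,000 contacts at the start.
rate = net_growth_rate(50_000, new_subs=3_100, unsubscribes=700, suppressions=900)
print(f"{rate:.1%}", classify_growth(rate))  # 3.0% within or above range
```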

Measure the percentage of your database marked as “engaged” according to your defined criteria and watch for gradual erosion. Set a minimum engagement threshold—perhaps any interaction in the past 60 days—and automatically suppress contacts who fall below it. This practice protects deliverability while creating urgency to improve content quality and relevance for remaining subscribers.
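
The 60-day rule splits the database into an engaged set and a suppression set; tracking the engaged percentage week over week then shows any gradual erosion. Contact IDs and dates below are hypothetical, and contacts with no recorded interaction are treated as not engaged (an assumption).

```python
from datetime import date, timedelta


def split_by_engagement(last_interaction, today, threshold_days=60):
    """Return (engaged, to_suppress) contact sets using a last-interaction cutoff.
    Contacts with no recorded interaction are treated as not engaged."""
    cutoff = today - timedelta(days=threshold_days)
    engaged = {c for c, d in last_interaction.items() if d is not None and d >= cutoff}
    return engaged, set(last_interaction) - engaged


today = date(2024, 6, 1)
contacts = {"a": date(2024, 5, 20), "b": date(2024, 2, 1), "c": None, "d": date(2024, 4, 10)}
engaged, to_suppress = split_by_engagement(contacts, today)
print(f"{len(engaged) / len(contacts):.0%} engaged; suppress {sorted(to_suppress)}")
# 50% engaged; suppress ['b', 'c']
```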

Technical Performance and Workflow Reliability

Marketing automation relies on numerous technical integrations, data flows, and conditional logic branches that can break silently without proper monitoring. Check for workflow errors, failed integrations, and stuck contacts weekly to ensure your automation actually executes as designed. Even simple workflows involve dozens of potential failure points from API changes, field mapping errors, or permission issues.

Review the number of contacts in each workflow status—active, completed, exited early, and waiting in delays. Unusual accumulations in waiting statuses might indicate broken triggers or logic errors preventing progression. Large numbers of early exits could signal overly aggressive unsubscribe prompts, poor targeting that sends irrelevant messages, or technical problems removing contacts inappropriately.
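
Unusual accumulations in waiting statuses can be detected by comparing how long each contact has waited with the configured delay for its step. This sketch assumes a hypothetical export of `(contact_id, entered_wait_at, configured_delay)` rows and a six-hour grace period chosen only for illustration.

```python
from datetime import datetime, timedelta

GRACE = timedelta(hours=6)  # hypothetical tolerance beyond the configured delay


def stuck_contacts(waiting, now):
    """waiting: list of (contact_id, entered_wait_at, configured_delay).
    Returns contacts still waiting well past their step's configured delay,
    a common symptom of a broken trigger or logic branch."""
    return [cid for cid, entered, delay in waiting if now - entered > delay + GRACE]


now = datetime(2024, 6, 3, 9, 0)
waiting = [
    ("c1", datetime(2024, 6, 2, 9, 0), timedelta(days=1)),   # due now, within grace
    ("c2", datetime(2024, 5, 27, 9, 0), timedelta(days=2)),  # 7 days in a 2-day wait
]
print(stuck_contacts(waiting, now))  # ['c2']
```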

Monitor send time distribution to verify contacts enter workflows and receive messages during optimal windows. Many automation platforms allow time-of-day controls, but configuration errors can result in midnight sends or weekend blasts that generate poor engagement. Weekly spot checks of actual send times versus intended schedules catch these issues before they significantly impact performance.
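
A spot check of actual send times needs each timestamp converted into the contact's local time before it is compared with the intended window. The weekday 08:00-18:00 window is a hypothetical schedule; the example uses a fixed UTC-4 offset to stay dependency-free, whereas real checks should pass a `zoneinfo.ZoneInfo` so daylight-saving transitions are handled.

```python
from datetime import datetime, time, timedelta, timezone, tzinfo

# Hypothetical intended window: weekdays, 08:00-18:00 in the contact's local time.
WINDOW_START, WINDOW_END = time(8, 0), time(18, 0)


def off_schedule(sent_at_utc: datetime, contact_tz: tzinfo) -> bool:
    """True if a send landed on a weekend or outside the daily window, in local time."""
    local = sent_at_utc.astimezone(contact_tz)
    is_weekend = local.weekday() >= 5  # Saturday=5, Sunday=6
    return is_weekend or not (WINDOW_START <= local.time() < WINDOW_END)


# Fixed UTC-4 offset for the example; in practice use zoneinfo.ZoneInfo("America/New_York").
eastern = timezone(timedelta(hours=-4))
print(off_schedule(datetime(2024, 6, 4, 14, 0, tzinfo=timezone.utc), eastern))  # False
print(off_schedule(datetime(2024, 6, 4, 4, 0, tzinfo=timezone.utc), eastern))   # True
```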

Test your workflows end-to-end at least monthly by creating test contacts that move through entire sequences. Automated testing catches broken links, rendering problems across email clients, personalization failures, and logic errors that might only affect specific segments. Document test results and track the number of issues found per workflow as a quality metric that should trend downward over time as you improve development practices.
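
Part of that end-to-end test can be automated by scanning each rendered test email for personalization failures and malformed links. This is a minimal sketch: the merge-tag syntaxes (`{{ field }}` and `%%field%%`) are assumptions that vary by platform, and it only checks link format without fetching URLs, so it will not catch links that point to missing pages.

```python
import re

MERGE_TAG = re.compile(r"\{\{\s*[\w.]+\s*\}\}|%%[\w.]+%%")  # hypothetical tag syntaxes
EMPTY_GREETING = re.compile(r"\b(?:Hi|Hello|Dear)\s*,")     # e.g. "Hi ," with no name
HREF = re.compile(r'href\s*=\s*"([^"]*)"')


def check_rendered_email(html):
    """Return a list of issues found in one rendered test send."""
    issues = [f"unrendered merge tag: {m}" for m in MERGE_TAG.findall(html)]
    if EMPTY_GREETING.search(html):
        issues.append("empty personalized greeting")
    for url in HREF.findall(html):
        if not url.startswith(("https://", "http://", "mailto:")):
            issues.append(f"suspicious link: {url!r}")
    return issues


sample = '<p>Hi ,</p><p>Your plan: {{ plan_name }}</p><a href="">Upgrade</a>'
print(check_rendered_email(sample))
```

Counting the returned issues per workflow gives the quality metric the paragraph suggests tracking over time.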

Content Performance and Message Optimization Opportunities

Individual message performance within workflows reveals what resonates with your audience and what falls flat. Track open rates, click rates, and click-to-open rates for each email in your sequences. Click-to-open rate proves particularly valuable because it measures engagement quality among people who actually saw your message, removing deliverability issues from the equation and focusing on content effectiveness.
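
The three per-message rates share the same inputs, so they can be computed together. Open and click rates are taken over delivered emails and click-to-open rate over unique opens; the per-message stats below are hypothetical.

```python
def email_rates(delivered, unique_opens, unique_clicks):
    """Open rate, click rate, and click-to-open rate (CTOR) for one message."""
    return {
        "open_rate": unique_opens / delivered,
        "click_rate": unique_clicks / delivered,
        "ctor": unique_clicks / unique_opens if unique_opens else 0.0,
    }


# Hypothetical per-message stats for a three-email sequence: (delivered, opens, clicks).
sequence = [(9_800, 3_900, 470), (9_700, 3_100, 160), (9_650, 2_800, 420)]
for step, stats in enumerate(sequence, start=1):
    r = email_rates(*stats)
    print(step, {k: round(v, 3) for k, v in r.items()})
```

In this made-up data, message two has a much lower CTOR than its neighbors, pointing at its content rather than its deliverability.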

Compare subject line performance across similar messages to identify patterns in what drives opens. Test variables like length, personalization, urgency, curiosity, and value propositions systematically. Build a swipe file of your highest-performing subject lines categorized by workflow type and audience segment, creating templates that accelerate future campaign development while maintaining quality standards.
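
Before a subject line earns a place in the swipe file, it helps to confirm its advantage is larger than chance. One standard option (a suggested technique, not something the text prescribes) is a two-sided two-proportion z-test on open rates. The sketch assumes random assignment of recipients to variants and reasonably large samples; the counts are hypothetical.

```python
from math import erf, sqrt


def two_proportion_z(opens_a, sent_a, opens_b, sent_b):
    """Two-sided two-proportion z-test comparing open rates of variants A and B.
    Returns (z, p_value). Assumes independent random assignment and large samples."""
    p_a, p_b = opens_a / sent_a, opens_b / sent_b
    pooled = (opens_a + opens_b) / (sent_a + sent_b)
    se = sqrt(pooled * (1 - pooled) * (1 / sent_a + 1 / sent_b))
    z = (p_a - p_b) / se
    # Normal CDF via erf: Phi(x) = 0.5 * (1 + erf(x / sqrt(2)))
    p_value = 2 * (1 - 0.5 * (1 + erf(abs(z) / sqrt(2))))
    return z, p_value


# Hypothetical test: personalized subject line (A) vs generic (B).
z, p = two_proportion_z(1_260, 5_000, 1_150, 5_000)
print(f"z={z:.2f}, p={p:.3f}")
```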

Analyze which specific links within emails generate the most clicks and conversions. Heat mapping tools show exactly where subscribers engage, revealing whether they respond to primary calls-to-action, secondary offers, or unexpected content elements. This data informs both email layout optimization and content strategy by showing what topics and offers genuinely interest your audience versus what you hope they’ll care about.
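
Link-level reporting is an aggregation over click events. A minimal sketch, assuming a hypothetical click log where each event records the link label and whether the click led to a conversion:

```python
from collections import Counter

# Hypothetical click log: (link_label, converted_after_click)
clicks = [("primary_cta", True), ("primary_cta", False), ("blog_link", False),
          ("primary_cta", False), ("pricing_ps", True), ("blog_link", False)]


def link_report(clicks):
    """Clicks and post-click conversions per link, sorted by click count."""
    click_counts = Counter(label for label, _ in clicks)
    conv_counts = Counter(label for label, converted in clicks if converted)
    return [(label, n, conv_counts[label]) for label, n in click_counts.most_common()]


for label, n_clicks, n_conv in link_report(clicks):
    print(f"{label:12} clicks={n_clicks} conversions={n_conv}")
```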

Review the performance of different content formats including plain text versus HTML, long-form versus short messages, and image-heavy versus text-focused emails. Testing these variables systematically builds understanding of your audience’s preferences and consumption patterns. Some segments prefer detailed educational content while others respond better to brief, action-oriented messages, and weekly monitoring reveals these preferences through behavioral data rather than surveys.

Implementing Your Weekly Audit Process for Sustained Improvement

Establishing a consistent audit routine transforms these metrics from theoretical best practices into practical performance drivers. Schedule a recurring 60-90 minute block every week specifically for workflow review, treating it with the same priority as client meetings or sales calls. Consistency matters more than perfection: an adequate audit performed weekly outperforms a comprehensive analysis done quarterly.

Create a standardized dashboard that displays all of these metric categories in a single view, eliminating the need to navigate multiple platform screens during reviews. Most automation platforms support custom reporting, and third-party analytics tools can aggregate data from multiple sources. Your dashboard should highlight week-over-week changes and flag metrics that fall outside normal ranges, directing attention toward issues that need investigation.
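
The week-over-week highlight can be computed before the data reaches any dashboard tool. In this minimal sketch the metric names and values are hypothetical, and the 20% alert band is an assumed default that should be tuned per metric.

```python
def week_over_week(current, previous, alert_pct=20.0):
    """Percent change per metric vs last week; flag moves beyond +/- alert_pct.
    The 20% alert band is a hypothetical default; tune it per metric."""
    report = {}
    for metric, now in current.items():
        before = previous.get(metric)
        if not before:
            continue  # no baseline (missing or zero): cannot compute a change
        change = 100.0 * (now - before) / before
        report[metric] = (round(change, 1), abs(change) > alert_pct)
    return report


last_week = {"delivery_rate": 98.6, "ctor": 12.0, "complaint_rate": 0.05}
this_week = {"delivery_rate": 98.4, "ctor": 9.0, "complaint_rate": 0.08}
print(week_over_week(this_week, last_week))
```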

Document your findings and actions in a shared audit log that creates institutional memory and prevents duplicated effort. Note what you investigated, what you changed, and what results you expect from optimizations. This historical record proves invaluable when new team members join, when explaining performance trends to stakeholders, and when deciding whether specific tactics deserve continued testing or should be abandoned.

Building this disciplined approach to performance monitoring creates compound advantages over time. You develop intuition about normal performance ranges, spot anomalies faster, and build a library of proven optimizations that can be applied to new workflows. Teams that commit to weekly audits gain more than a one-time conversion lift; they establish capabilities that drive continuous improvement long after the initial best practices are in place.