Marketing automation platforms promise seamless efficiency, but a single integration failure can corrupt your entire lead database, send duplicate emails to prospects, or disconnect your sales team from critical customer data. The cost of these failures extends beyond lost revenue—they erode trust with customers who receive irrelevant communications and frustrate internal teams who can’t access accurate information when they need it most.

Integration testing isn’t optional maintenance work that happens after implementation. It’s a strategic safeguard that prevents the cascading failures that plague marketing operations teams. When your CRM doesn’t sync properly with your email platform, or your webinar tool fails to pass registration data to your nurture sequences, every subsequent marketing decision gets built on faulty intelligence.

This comprehensive checklist provides a systematic approach to testing every critical integration point in your marketing automation stack. By following these seven steps, you’ll identify vulnerabilities before they impact customer experience, maintain data integrity across platforms, and ensure your automation workflows execute exactly as designed.

Establish Baseline Data Mapping Standards Before Integration

The foundation of successful integration testing starts with crystal-clear documentation of how data should flow between systems. Before connecting any platforms, create a comprehensive field mapping document that defines exactly which data points sync, in which direction, and with what frequency. This reference document becomes your testing standard and troubleshooting guide when discrepancies emerge.

Identify every custom field in your marketing automation platform and determine its corresponding field in connected systems. Generic field names like “Company” might map to “Account Name” in your CRM or “Organization” in your webinar platform. Document these mappings with absolute specificity, including data type requirements, character limits, and acceptable values. A text field in one system may need to become a picklist value in another, requiring transformation rules that your integration must handle correctly.
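One way to make a field mapping document testable is to express it as data. The sketch below is only an illustration: the `FieldMapping` class, the `Industry__c` target name, the 80-character limit, and the picklist values are all assumptions, not settings from any particular platform.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass(frozen=True)
class FieldMapping:
    source_field: str
    target_field: str
    max_length: Optional[int] = None
    allowed_values: Optional[frozenset] = None
    transform: Optional[Callable[[str], str]] = None  # extra rule applied after trimming


# Hypothetical mapping from the automation platform to the CRM.
MAPPINGS = [
    FieldMapping("Company", "Account Name", max_length=80),
    FieldMapping(
        "Industry",
        "Industry__c",
        allowed_values=frozenset({"Software", "Finance", "Healthcare"}),
        transform=str.title,  # free text becomes a picklist-style value
    ),
]


def apply_mappings(record: dict) -> tuple:
    """Return (transformed record, list of validation errors)."""
    out, errors = {}, []
    for m in MAPPINGS:
        if m.source_field not in record:
            continue
        value = record[m.source_field].strip()
        if m.transform is not None:
            value = m.transform(value)
        if m.max_length is not None and len(value) > m.max_length:
            errors.append(f"{m.target_field}: exceeds {m.max_length} characters")
        elif m.allowed_values is not None and value not in m.allowed_values:
            errors.append(f"{m.target_field}: {value!r} is not an allowed value")
        else:
            out[m.target_field] = value
    return out, errors
```

Calling `apply_mappings({"Company": "  Acme Corp ", "Industry": "software"})` yields the CRM-shaped record with no errors, while an industry outside the picklist is reported instead of being synced.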

Define your source of truth for each data element. When contact information exists in multiple systems, conflicts inevitably arise. Decide in advance whether your CRM, marketing automation platform, or another tool serves as the authoritative source for email addresses, phone numbers, job titles, and other key fields. This decision determines sync direction and conflict resolution rules that prevent data overwrites from destroying valuable information.
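A source-of-truth decision can be captured as a small lookup table that conflict resolution consults. This is a minimal sketch with assumed field names and system labels (`crm`, `automation`). The fallback to a non-empty value from another system is one possible design choice, not a rule from this checklist.

```python
# Hypothetical ownership table: which system is authoritative per field.
SOURCE_OF_TRUTH = {
    "email": "crm",
    "phone": "crm",
    "job_title": "crm",
    "lead_score": "automation",
}

EMPTY = (None, "")


def resolve(field: str, values_by_system: dict):
    """Pick the value held by the authoritative system for this field.

    If the authoritative system has no value, fall back to the first
    non-empty value elsewhere so a blank never overwrites real data.
    """
    owner = SOURCE_OF_TRUTH[field]
    authoritative = values_by_system.get(owner)
    if authoritative not in EMPTY:
        return authoritative
    for value in values_by_system.values():
        if value not in EMPTY:
            return value
    return None
```

For example, `resolve("email", {"crm": "a@example.com", "automation": "b@example.com"})` returns the CRM value regardless of which system was updated last.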

Create bidirectional sync rules only when absolutely necessary. Most marketing operations benefit from unidirectional data flows that reduce complexity and failure points. Your marketing automation platform might push engagement data to your CRM while pulling contact updates in return, but avoid creating circular update patterns where changes in either system trigger changes in the other. These loops create timestamp conflicts and race conditions that corrupt records unpredictably.

Configure Test Environments That Mirror Production Systems

Testing integrations in production environments introduces unacceptable risk to your live data and active campaigns. Establish dedicated sandbox or staging environments for both your marketing automation platform and connected systems before running any integration tests. These environments should replicate your production configuration as closely as possible, including custom fields, workflows, and user permissions.

Populate test environments with representative sample data that covers edge cases and common scenarios. Include contacts with incomplete information, unusually long field values, special characters in names, international phone number formats, and multiple email addresses. Real-world data rarely fits perfect templates, so your test data should reflect the messy reality your integrations will encounter daily.
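A small, hand-built fixture set is often enough to cover these cases. The records below are invented for illustration. The field names and the `case` label are assumptions to adapt to your own schema.

```python
def build_edge_case_contacts() -> list:
    """Return made-up contacts, one per edge case named in the text."""
    return [
        # Incomplete information: missing last name and phone.
        {"case": "incomplete", "first_name": "Ana", "last_name": "",
         "email": "ana@example.com", "phone": None},
        # Unusually long field value (300 characters).
        {"case": "long_value", "first_name": "Bo", "last_name": "Li",
         "email": "bo@example.com", "company": "A" * 300},
        # Special characters: diacritics, apostrophe, hyphen.
        {"case": "special_chars", "first_name": "Zoë",
         "last_name": "O'Brien-Muñoz", "email": "zoe@example.com"},
        # International phone number format.
        {"case": "intl_phone", "first_name": "Raj", "last_name": "Patel",
         "email": "raj@example.com", "phone": "+44 20 7946 0958"},
        # Multiple email addresses in one field.
        {"case": "multi_email", "first_name": "Sam", "last_name": "Ng",
         "email": "sam@example.com; sam.alt@example.org"},
    ]
```

Tagging each record with a `case` label makes it easy to tell which scenario failed when a sync test reports a mismatch.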

Verify that your test environment API connections use separate credentials from production. Many integration failures occur when test configurations accidentally write to production databases or trigger live campaigns. Distinct API keys, webhooks, and connection endpoints ensure that even catastrophic test failures remain isolated from customer-facing systems.
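A pre-flight guard can enforce this separation before any test runs. The sketch assumes each environment's settings are available as a dictionary with `api_key`, `webhook_url`, and `base_url` entries. Those key names are hypothetical.

```python
class UnsafeTestConfig(Exception):
    """Raised when a test configuration is not isolated from production."""


# Hypothetical setting names that must never be shared across environments.
ISOLATED_KEYS = ("api_key", "webhook_url", "base_url")


def check_test_config(test_cfg: dict, prod_cfg: dict) -> None:
    """Raise UnsafeTestConfig if any isolated setting is missing or shared."""
    problems = []
    for key in ISOLATED_KEYS:
        test_value = test_cfg.get(key)
        if not test_value:
            problems.append(f"{key}: missing from test config")
        elif test_value == prod_cfg.get(key):
            problems.append(f"{key}: identical to production")
    if problems:
        raise UnsafeTestConfig("; ".join(problems))
```

Running this check as the first step of a test suite means a copied production key stops the run before any record is written.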

Document the specific differences between test and production environments. Some platforms limit API call volumes in sandbox environments, or disable certain features that work differently in testing mode. Understanding these limitations helps you interpret test results accurately and plan for production behavior that may diverge from testing observations.

Test Individual Integration Touchpoints Systematically

Break complex integrations into discrete testable components before evaluating the complete data flow. Each integration typically involves multiple touchpoints—initial sync, ongoing updates, deletions, and specific trigger events. Testing these elements individually isolates problems and accelerates troubleshooting when issues surface.

Start with manual single-record tests that verify basic connectivity. Create a new contact in your source system and confirm it appears in the destination platform within the expected timeframe. Check that every mapped field transfers correctly with appropriate data types and formatting. A phone number should arrive as a phone number, not converted to scientific notation. A multi-select picklist should preserve all selected values, not just the first option.
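A single-record check like this can be partly automated by comparing the source and destination copies field by field. The function below is a sketch. It assumes both records have already been fetched as dictionaries and that `field_map` (a hypothetical name) lists source-to-destination field pairs.

```python
def diff_synced_record(source: dict, destination: dict, field_map: dict) -> list:
    """Compare mapped fields and describe every mismatch found."""
    problems = []
    for src_field, dst_field in field_map.items():
        expected = source.get(src_field)
        actual = destination.get(dst_field)
        if type(expected) is not type(actual):
            # Catches, for example, a phone string that arrived as a float.
            problems.append(
                f"{dst_field}: type changed from {type(expected).__name__} "
                f"to {type(actual).__name__}"
            )
        elif isinstance(expected, list):
            # Multi-select values: order may differ, membership may not.
            if sorted(expected) != sorted(actual):
                problems.append(f"{dst_field}: multi-select values differ")
        elif expected != actual:
            problems.append(f"{dst_field}: expected {expected!r}, got {actual!r}")
    return problems
```

An empty list means every mapped field arrived with the same type and value. Anything else names the field to investigate.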

| Integration Touchpoint | Test Scenario | Expected Result | Common Failure Mode |
|---|---|---|---|
| New Record Creation | Add contact in CRM | Contact appears in automation platform within 5 minutes | API rate limiting delays sync |
| Field Updates | Change email address | Updated email syncs bidirectionally | Conflict rules overwrite newer data |
| Record Deletion | Delete contact from source | Contact marked inactive in destination | Hard delete orphans related records |
| Bulk Import | Upload 1000 test records | All records sync with zero errors | Character encoding breaks special characters |
| Custom Field Sync | Update dropdown value | Picklist value matches exactly | Case sensitivity creates duplicate values |
| Activity Logging | Send email from automation | Activity appears in CRM timeline | Activity type mapping incorrect |

Test update scenarios with the same rigor as initial creation. Modify existing records in both systems and verify that changes flow in the correct direction without overwriting fields that should remain static. Update timestamps should advance properly, and audit logs should capture who made changes and when. Integration conflicts often emerge most clearly during updates rather than initial syncs.

Validate deletion and deactivation workflows carefully. When a contact unsubscribes or a lead gets disqualified, that status must propagate correctly across all systems. Test hard deletes versus soft deletes, and confirm that related records like activity history and campaign membership handle deletions appropriately. A deleted contact in your CRM shouldn’t remain active in nurture campaigns or continue receiving communications.

Validate Automation Trigger Reliability Under Load

Marketing automation integrations frequently depend on triggers—specific events that initiate data syncs or workflow actions. A form submission might trigger a contact creation, while a status change could launch a nurture sequence. These triggers must fire reliably under realistic data volumes, not just in controlled single-event tests.

Execute volume testing that simulates realistic operational loads. If your weekly webinar typically generates 300 registrations, test whether your integration handles 350 simultaneous form submissions without dropping records or triggering duplicate entries. API rate limits become critical constraints during high-volume events, and integrations that work perfectly for individual records may fail spectacularly during bulk operations.
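After a burst test, the simplest audit compares the IDs you submitted against the IDs that arrived. This sketch assumes each test submission carries a unique identifier that can be read back from the destination system. The function name is illustrative.

```python
from collections import Counter


def audit_burst(submitted_ids: list, synced_ids: list) -> tuple:
    """Return (dropped IDs, duplicated IDs) after a volume test."""
    counts = Counter(synced_ids)
    dropped = sorted(set(submitted_ids) - set(counts))
    duplicated = sorted(i for i, n in counts.items() if n > 1)
    return dropped, duplicated
```

For the 350-submission scenario above, both lists should come back empty. Any entry in `dropped` or `duplicated` points to a specific record to trace through the integration logs.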

Monitor trigger execution timing and sequence dependencies. Some workflows require multiple systems to complete actions in specific order—a contact must be created before they can be added to a campaign, which must exist before the enrollment can process. Test whether timing delays or system latencies disrupt these sequences, causing workflows to fail midstream or execute actions out of order.

Verify error handling and retry logic when triggers fail. Production environments experience temporary outages, network interruptions, and API timeouts regularly. Your integration should detect failed trigger events and retry them appropriately rather than silently dropping data. Test how your system responds to deliberate failures by temporarily disconnecting network access or invalidating API credentials during active syncs.
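Retry behaviour is easier to test when the retry loop is explicit. The sketch below shows one common approach, exponential backoff. `TransientSyncError` and the `send` callable are placeholders for whatever your integration layer actually raises and calls.

```python
import time


class TransientSyncError(Exception):
    """Placeholder for timeouts, dropped connections, and rate-limit responses."""


def sync_with_retry(send, payload, max_attempts=4, base_delay=1.0, sleep=time.sleep):
    """Call send(payload), retrying transient failures with exponential backoff.

    Delays grow as base_delay * 1, 2, 4, ... The final failure is re-raised
    so the event is surfaced rather than silently dropped.
    """
    for attempt in range(1, max_attempts + 1):
        try:
            return send(payload)
        except TransientSyncError:
            if attempt == max_attempts:
                raise
            sleep(base_delay * 2 ** (attempt - 1))
```

Passing `sleep` as a parameter lets a test substitute a recorder for real waiting, so deliberate-failure tests run instantly.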

Confirm that trigger conditions evaluate correctly across all scenarios. A workflow triggered by “lead score exceeds 50” must fire when scores increment past that threshold but not when they decrease then increase again unless specifically designed to do so. Test boundary conditions, null values, and edge cases that may not trigger workflows as intended despite meeting literal criteria.
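The threshold example can be made concrete with a small evaluator that fires once per contact unless re-firing is explicitly enabled. This is a sketch of one possible design. The class name and `allow_refire` flag are assumptions, and real platforms implement this logic internally.

```python
class ThresholdTrigger:
    """Fire when a score crosses above a threshold, at most once by default."""

    def __init__(self, threshold=50, allow_refire=False):
        self.threshold = threshold
        self.allow_refire = allow_refire
        self._fired = set()  # contact IDs that have already triggered

    def evaluate(self, contact_id, previous, current) -> bool:
        if current is None:  # null scores never trigger
            return False
        was_above = previous is not None and previous > self.threshold
        crossed = current > self.threshold and not was_above
        if not crossed:
            return False
        if contact_id in self._fired and not self.allow_refire:
            return False
        self._fired.add(contact_id)
        return True
```

With the default settings, a score moving from 45 to 55 fires, 50 to 50 does not (it meets but does not exceed the threshold), and a later dip to 40 followed by a rise to 55 stays silent.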

Monitor Data Consistency Across Complete Customer Journeys

Individual integration touchpoints might test successfully while the complete customer journey still experiences data fragmentation. End-to-end testing validates that information remains consistent as contacts progress through multi-step campaigns that span multiple integrated platforms.

Map complete customer journeys that touch every integrated system. A typical B2B journey might include form submission on your website, entry into a nurture campaign in your automation platform, webinar registration in a third-party tool, attendance tracking, follow-up email sequences, sales outreach logged in your CRM, and opportunity creation. Each transition point between systems represents a potential failure point where data can fragment or become inconsistent.

Create test personas that execute these complete journeys from start to finish. Follow a test contact through every stage, verifying at each step that all previous data persists correctly and new information appends appropriately. Check that engagement history, source attribution, and behavioral data accumulate coherently rather than creating disconnected activity records across systems.

Validate data consistency across different reporting views. The same contact should show identical information whether viewed in your marketing automation platform, CRM, analytics dashboard, or any other integrated tool. Discrepancies indicate sync failures, field mapping errors, or timing issues that compromise data integrity. Run regular reconciliation reports that compare record counts and field values across systems.
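A reconciliation report of this kind can start as a plain comparison of two exports. The sketch assumes each system's records have been loaded into a dictionary keyed by a shared identifier such as a lowercased email address. The report keys are illustrative.

```python
def reconcile(system_a: dict, system_b: dict, fields: list) -> dict:
    """Compare record counts and selected field values across two systems."""
    keys_a, keys_b = set(system_a), set(system_b)
    mismatched = {}
    for key in sorted(keys_a & keys_b):
        diffs = [f for f in fields if system_a[key].get(f) != system_b[key].get(f)]
        if diffs:
            mismatched[key] = diffs
    return {
        "count_a": len(system_a),
        "count_b": len(system_b),
        "only_in_a": sorted(keys_a - keys_b),
        "only_in_b": sorted(keys_b - keys_a),
        "mismatched": mismatched,
    }
```

Equal counts alone are not proof of consistency, which is why the report also lists records missing on either side and the specific fields that disagree.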

Test parallel journeys where contacts exist in multiple campaigns simultaneously. Real prospects often engage with several marketing initiatives at once—downloading content while registered for a webinar and being nurtured through an email sequence. Verify that parallel activities all sync correctly without creating conflicts, duplicate records, or race conditions where simultaneous updates corrupt data.

Implement Continuous Monitoring and Alert Systems

Integration testing isn’t a one-time implementation task but an ongoing monitoring requirement. Systems change, APIs get updated, data volumes fluctuate, and previously stable integrations can fail without warning. Continuous monitoring detects problems before they cascade into major data quality issues.

Establish baseline metrics for normal integration performance. Track average sync times, daily record volumes, error rates, and API call consumption. These baselines help you identify anomalies quickly—a sudden spike in sync errors or dramatic slowdown in processing times often indicates emerging problems that require immediate investigation.

Configure automated alerts for critical integration failures. Real-time notifications when sync jobs fail, error rates exceed thresholds, or specific workflows stop executing enable rapid response before problems compound. Alert fatigue is real, so calibrate thresholds carefully to surface genuinely problematic issues while filtering routine variations.
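One simple way to calibrate an alert threshold against a baseline is to flag values that sit well above recent history. The sketch below uses mean plus three standard deviations. Both the three-sigma rule and the seven-sample minimum are assumptions to tune, not recommendations from this checklist.

```python
from statistics import mean, stdev


def is_anomalous(history: list, latest: float, sigmas: float = 3.0,
                 min_samples: int = 7) -> bool:
    """Return True when latest exceeds the baseline by more than `sigmas` deviations.

    With fewer than `min_samples` observations there is no reliable
    baseline yet, so nothing is flagged.
    """
    if len(history) < min_samples:
        return False
    return latest > mean(history) + sigmas * stdev(history)
```

Given a week of daily error rates around 1%, a reading of 5% is flagged while 1.2% is treated as routine variation, which helps limit alert fatigue.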

Schedule regular automated test transactions that validate integration health. Synthetic monitoring creates test records on predetermined schedules and verifies they sync correctly end-to-end. These proactive checks detect integration degradation before it impacts real customer data, providing early warning of authentication expirations, API changes, or configuration drift.
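A synthetic check reduces to two steps: create a uniquely marked record, then poll for it in the destination. In this sketch, `create_in_source` and `find_in_destination` are hypothetical callables you would implement against your own platforms, and the default timeout and polling interval are placeholders.

```python
import time
import uuid


def run_synthetic_check(create_in_source, find_in_destination,
                        timeout_s=300, poll_s=15,
                        sleep=time.sleep, clock=time.monotonic) -> bool:
    """Create a marked test contact and report whether it synced in time."""
    # A unique marker keeps synthetic records distinguishable from real ones.
    marker = f"synthetic-{uuid.uuid4().hex}@example.com"
    create_in_source(marker)
    deadline = clock() + timeout_s
    while True:
        if find_in_destination(marker):
            return True
        if clock() >= deadline:
            return False
        sleep(poll_s)
```

Run on a schedule, a `False` result becomes the early warning. Remember to clean up or exclude the synthetic records so they do not pollute reporting.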

Review integration logs and error reports systematically. Even integrations that appear functional may generate intermittent errors that eventually cause major failures. Weekly log reviews identify patterns—specific record types that consistently fail, particular times when errors spike, or gradual increases in processing times that foreshadow capacity problems.

Document Recovery Procedures and Rollback Strategies

Despite thorough testing, integration failures will eventually occur in production. Documented recovery procedures minimize damage and accelerate restoration of normal operations when problems emerge. Your integration testing process should include validating these recovery mechanisms before you need them urgently.

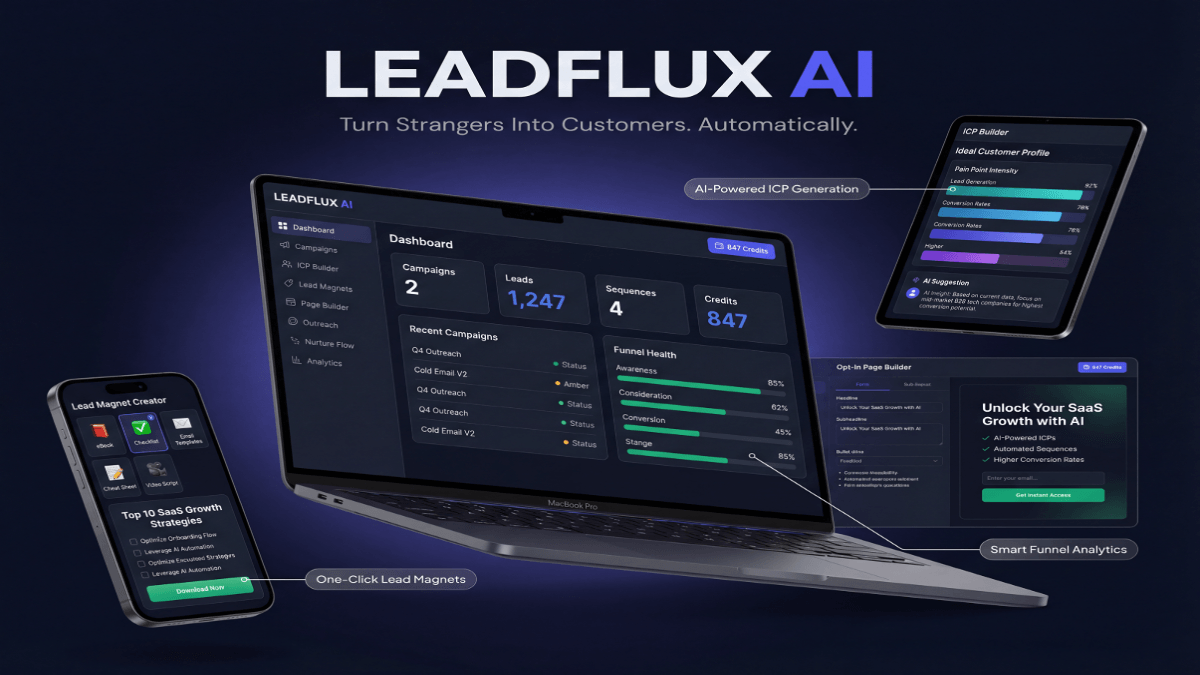

Create detailed runbooks for common failure scenarios. Document exactly how to pause sync jobs, roll back problematic changes, restore data from backups, and resume normal operations once issues resolve. These procedures should be executable by team members who didn’t design the original integration, with step-by-step instructions that assume no specialized knowledge.

Establish data backup and restoration capabilities before integration problems occur. Regular backups of both systems involved in integrations enable recovery when sync failures corrupt records or overwrite valuable data. Test restoration procedures periodically to verify that backups capture necessary information and can be restored within acceptable timeframes.

Define clear escalation paths and responsible parties for different severity levels. Minor sync delays might require only monitoring, while data corruption demands immediate intervention from senior technical resources. Document who gets notified at each escalation level, what authority they have to make emergency changes, and how to engage vendor support when needed.

Test your rollback procedures in staging environments. Deliberately create integration failures, then execute your documented recovery steps to verify they work as intended. This testing reveals gaps in procedures, identifies missing permissions or access requirements, and builds team confidence in their ability to recover from real incidents.

Marketing automation integration testing protects the data foundation that powers your entire revenue engine. By systematically validating each integration touchpoint, monitoring ongoing performance, and preparing for inevitable failures, you transform integrations from fragile connection points into reliable infrastructure. The seven-step checklist presented here provides a repeatable framework for preventing the data sync failures that undermine marketing effectiveness and erode customer trust. Implement these practices consistently, and your integrated marketing stack becomes a competitive advantage rather than an operational liability.