Why Most Proactive Chat Setups Fail Before the First Message

Proactive chat has the potential to be your highest-converting lead generation tool, but only when the mechanics are right. Most businesses install a chat widget, set a generic time-based trigger, and wonder why visitors dismiss the popup without engaging. The problem is not the chat software itself. It is the guesswork involved in placement, timing, and trigger logic that undermines results before a single conversation begins.

The difference between a chat widget that generates qualified leads and one that annoys visitors comes down to systematic testing. Treating your chat setup like a landing page, something to be iterated, measured, and refined, is the mindset shift that separates high-performing teams from those stuck at a two percent engagement rate. Experimentation is not optional; it is the entire strategy.

Over the course of ongoing optimization work with B2B and eCommerce clients, nine specific experiments consistently moved the needle on proactive chat conversions. Some results were incremental, others were dramatic. What every experiment shared was a clear hypothesis, a measurable outcome, and a repeatable process you can apply to your own setup starting today. This post breaks down each one in detail so you can adapt and deploy them without starting from scratch.

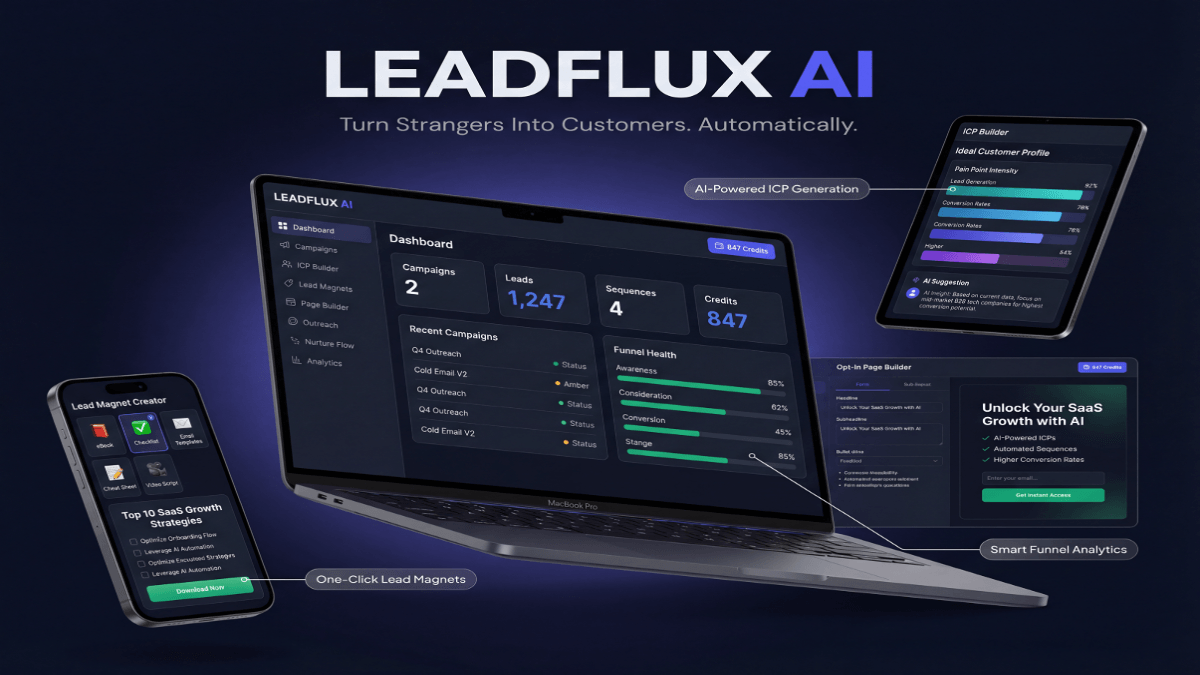

The most effective marketers today build a smarter lead generation funnel using automation rather than relying on manual outreach alone.

Experiments 1 Through 3: Placement and Visual Positioning Tests

The first experiment tested the classic bottom-right widget placement against a bottom-left position across a high-traffic SaaS pricing page. Conventional wisdom defaults to bottom-right because it mirrors the natural reading flow, but bottom-left placement saw a 34 percent lift in click-through rate on that specific page. The reason: the page’s primary CTA button sat in the bottom-right quadrant, and the competing visual elements were cannibalizing each other’s attention.

The second experiment examined widget size and icon style. Replacing a generic speech bubble icon with a real agent photo increased proactive chat open rates by 27 percent in the first two weeks of the test. Human faces trigger psychological trust responses that abstract icons simply cannot replicate. Even a small, circular avatar in the chat launcher communicates that a real person is available, which lowers the perceived barrier to starting a conversation.

The third experiment looked at widget visibility on mobile devices specifically. A sticky, full-width chat bar anchored to the bottom of mobile screens outperformed the standard floating bubble by 41 percent on engagement rate for mobile visitors. Mobile users scroll with their thumbs and naturally pause at the bottom of the screen — a bar format meets them exactly where their attention already lands. If you are running separate desktop and mobile experiences for your chat widget, this distinction alone can produce meaningful conversion gains.

One important takeaway from all three placement experiments: page-level context matters more than site-wide defaults. The winning placement on a pricing page was different from the winner on a blog post or a product demo page. Segment your widget placement by page type rather than applying a single configuration universally, and you will immediately unlock placement performance that a one-size-fits-all approach leaves on the table.
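Segmenting placement by page type can be expressed as a small lookup table. The sketch below is illustrative only: the page-type labels, the placement names, and the choice to use the sticky bar on every mobile page are assumptions, and only the pricing-page and mobile entries reflect results reported above.

```python
# Placement per page type. Only "pricing" (experiment one) and the mobile
# sticky bar (experiment three) come from the tests described above; the
# other entries are placeholders you would need to test yourself.
PLACEMENT_BY_PAGE_TYPE = {
    "pricing": "bottom-left",
    "blog": "bottom-right",   # placeholder, untested
    "demo": "bottom-right",   # placeholder, untested
}
DEFAULT_PLACEMENT = "bottom-right"
MOBILE_PLACEMENT = "sticky-bottom-bar"


def placement_for(page_type: str, is_mobile: bool) -> str:
    """Return the widget placement for a page type and device class."""
    if is_mobile:
        # Assumption: the mobile winner applies to every page type.
        return MOBILE_PLACEMENT
    return PLACEMENT_BY_PAGE_TYPE.get(page_type, DEFAULT_PLACEMENT)
```

Keeping the table separate from the lookup function means a new test winner becomes a one-line change rather than a new rule.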

Experiments 4 Through 6: Trigger Logic and Timing Refinements

Experiment four moved away from time-on-page triggers and toward scroll-depth triggers on long-form content pages. Instead of firing a proactive message after thirty seconds, the trigger was set to activate when a visitor scrolled past sixty percent of the page. Engagement rate jumped from 4.2 percent to 9.7 percent on those pages because the trigger fired when visitors had already demonstrated genuine interest rather than simply landing and hesitating. Scroll-depth signals intent in a way that time alone never can.
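A scroll-depth trigger reduces to one ratio and one comparison. The following is a minimal sketch of that logic, assuming depth is measured at the bottom edge of the viewport; the function and parameter names are hypothetical, and most chat platforms expose this as a rule setting rather than code.

```python
def scroll_depth(scroll_top: float, viewport_height: float, page_height: float) -> float:
    """Fraction of the page the bottom of the viewport has reached (0.0 to 1.0)."""
    if page_height <= 0:
        return 0.0
    depth = (scroll_top + viewport_height) / page_height
    return min(1.0, max(0.0, depth))


def should_fire_scroll_trigger(depth: float, already_fired: bool,
                               threshold: float = 0.6) -> bool:
    """Fire once, the first time the visitor reaches the depth threshold."""
    return not already_fired and depth >= threshold
```

With this definition a very short page starts at a high depth before any scrolling, so on such pages you may prefer to measure `scroll_top / (page_height - viewport_height)` instead.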

Experiment five tested exit-intent proactive chat against the scroll-depth trigger on product pages with high drop-off rates. Exit-intent triggers — firing when the mouse moves toward the browser’s close or back button — produced a 19 percent recovery rate among visitors who would have otherwise left without engaging. The message used was not a discount offer but a direct question: “Before you go, is there anything stopping you from moving forward today?” This open-ended, curiosity-based prompt outperformed promotional messages by a factor of two.
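Exit intent is usually approximated rather than detected directly, since a page cannot see the close or back button. One common heuristic, sketched here with hypothetical names, treats an upward cursor movement that crosses the top edge of the viewport as the signal. It does not apply to touch devices, which have no cursor.

```python
# The prompt used in experiment five.
EXIT_MESSAGE = "Before you go, is there anything stopping you from moving forward today?"


def is_exit_intent(prev_y: float, curr_y: float, already_shown: bool,
                   top_margin: float = 0.0) -> bool:
    """True when the cursor moves upward and crosses the top of the viewport.

    prev_y and curr_y are consecutive cursor positions measured from the top
    of the viewport. top_margin is an assumed tolerance in pixels.
    """
    moving_up = curr_y < prev_y
    crossed_top = curr_y <= top_margin
    return moving_up and crossed_top and not already_shown
```

The `already_shown` flag keeps the prompt from firing repeatedly when a visitor moves between the page and the browser toolbar.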

Experiment six stacked multiple behavioral signals to create a compound trigger. Instead of triggering on a single condition, the proactive message fired only when a visitor had spent more than ninety seconds on the page, scrolled past fifty percent, and visited at least two pages in the same session. This compound trigger reduced overall proactive chat volume by 38 percent but increased the lead conversion rate of those conversations by 61 percent. Fewer, better-qualified interactions produced dramatically better downstream outcomes for the sales team.
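The compound trigger is a logical AND over the three conditions. A minimal sketch, using the thresholds from experiment six and a hypothetical `SessionState` container for the signals:

```python
from dataclasses import dataclass


@dataclass
class SessionState:
    seconds_on_page: float
    scroll_depth: float       # fraction of the page, 0.0 to 1.0
    pages_in_session: int


def compound_trigger(state: SessionState) -> bool:
    """Fire only when all three behavioral conditions hold at once."""
    return (
        state.seconds_on_page > 90        # more than ninety seconds on the page
        and state.scroll_depth > 0.5      # scrolled past fifty percent
        and state.pages_in_session >= 2   # at least two pages this session
    )
```

For example, `compound_trigger(SessionState(120, 0.7, 3))` is true, while a visitor with the same time and scroll depth on their first page of the session does not qualify.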

The broader lesson from these three experiments is that most default trigger settings are built for volume, not quality. Platforms set conservative, broad triggers so that the software appears active and the dashboard metrics look impressive. Tightening your trigger logic with behavioral intent signals filters for visitors who are genuinely in a decision-making moment, and those are the conversations your team actually wants to be having.

Experiments 7 and 8: Message Copy and Personalization Variables

Experiment seven tested three proactive message formats on a B2B software demo request page: a feature-focused opener (“Did you know we integrate with Salesforce?”), a pain-point opener (“Struggling to get your team to adopt new tools?”), and a curiosity-gap opener (“Most visitors on this page have one question we can answer in two minutes — want to hear it?”). The curiosity-gap opener generated 2.3 times more replies than the feature-focused message and 1.6 times more than the pain-point opener. Curiosity, when framed credibly, is a more powerful engagement mechanism than leading with product claims.

Experiment eight introduced referral-source personalization into the proactive message. Visitors arriving from paid search ads saw a message referencing the search intent implied by the ad (“You searched for [product category] — here is what most buyers want to know before deciding”). Visitors from email campaigns saw messages that acknowledged the email context without being intrusive. Personalizing the first message based on traffic source produced a 44 percent improvement in reply rate compared to a universal default message shown to all visitors regardless of origin.
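One way to implement source-based openers is to read the campaign parameters on the landing URL. The sketch below assumes visits are tagged with the conventional `utm_medium` parameter; the medium values, the email wording, and the fallback copy are hypothetical, and the paid-search opener is adapted from the one quoted above.

```python
from urllib.parse import urlparse, parse_qs

DEFAULT_MESSAGE = "Have a question? We can help."  # hypothetical fallback copy


def traffic_source(landing_url: str) -> str:
    """Classify a visit from the utm_medium query parameter on the landing URL."""
    params = parse_qs(urlparse(landing_url).query)
    medium = params.get("utm_medium", [""])[0].lower()
    if medium in {"cpc", "ppc", "paidsearch"}:  # assumed tagging scheme
        return "paid_search"
    if medium == "email":
        return "email"
    return "other"


def opening_message(landing_url: str, product_category: str) -> str:
    """Pick the first proactive message based on where the visitor came from."""
    source = traffic_source(landing_url)
    if source == "paid_search":
        return (f"You searched for {product_category}. "
                "Here is what most buyers want to know before deciding.")
    if source == "email":
        # Hypothetical wording; the original email copy is not given.
        return "Thanks for clicking through from our email. Anything we can clarify?"
    return DEFAULT_MESSAGE
```

Untagged or unrecognized traffic falls through to the default message, so the personalization never blocks the chat from appearing.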

What made both experiments work was the underlying principle of relevance over reach. Generic messages cast a wide net but catch very little because they require visitors to do the cognitive work of self-identifying as the intended audience. Personalized, context-aware messages do that work for the visitor, which lowers friction and accelerates engagement. Modern chat platforms offer referral-source variables, URL parameters, and CRM data integrations that make this level of personalization achievable without complex engineering.

One additional finding from experiment eight: personalized messages also improved the quality of the conversation that followed. When visitors responded to a source-specific message, they were more likely to provide specific information about their situation rather than giving vague, exploratory responses. This meant the sales team received higher-quality context from the first reply, shortening the path from initial contact to qualified lead status by an average of one and a half conversation turns.

Experiment 9: The Full-Funnel Chat Experience Test

The ninth experiment was the most comprehensive and the most impactful. Instead of optimizing individual elements in isolation, this test designed a coordinated chat experience across the full visitor journey — from first landing page visit through pricing page exploration to demo request confirmation. Each stage of the funnel had its own widget placement, trigger logic, and message copy tailored to where the visitor was in their decision process.

Top-of-funnel pages used scroll-depth triggers with curiosity-gap messages designed to surface pain points and begin qualification. Mid-funnel pages — feature pages, comparison pages, and integration listings — used compound behavioral triggers with messages directly referencing the page topic. Bottom-of-funnel pages like pricing and demo request pages used exit-intent triggers with high-urgency, low-friction prompts that offered a direct path to a human conversation rather than another self-serve resource.
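The stage-by-stage setup described above can be captured as two lookup tables: one mapping funnel stage to trigger and message style, and one mapping page type to stage. This is a sketch, not the configuration used in the test; all key names and page-type labels are hypothetical.

```python
# Trigger and message style per funnel stage, as described for experiment nine.
FUNNEL_CHAT_CONFIG = {
    "top": {"trigger": "scroll_depth", "message_style": "curiosity_gap"},
    "mid": {"trigger": "compound", "message_style": "page_topic"},
    "bottom": {"trigger": "exit_intent", "message_style": "direct_to_human"},
}

# Hypothetical page-type labels; map your own templates to stages.
STAGE_BY_PAGE_TYPE = {
    "landing": "top",
    "features": "mid",
    "comparison": "mid",
    "integrations": "mid",
    "pricing": "bottom",
    "demo_request": "bottom",
}


def chat_config_for(page_type: str):
    """Return the chat settings for a page, or None if the page is unmapped."""
    stage = STAGE_BY_PAGE_TYPE.get(page_type)
    if stage is None:
        return None  # assumption: no proactive message on unmapped pages
    return {"stage": stage, **FUNNEL_CHAT_CONFIG[stage]}
```

Returning `None` for unmapped pages is a deliberate choice here: it keeps untested pages out of the experiment instead of silently giving them a default trigger.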

The coordinated funnel approach doubled proactive chat conversions compared to the baseline single-trigger setup that had been running before the experiment began. More importantly, it reduced the average number of chat interactions needed before a lead was ready to speak with sales. Visitors who experienced the full-funnel chat journey converted to scheduled demos at a rate 78 percent higher than those who encountered only a single, untargeted proactive message. The compounding effect of consistent, contextually relevant touchpoints throughout the session created a materially different visitor experience.

Implementing this approach requires mapping your visitor journey before configuring any chat settings. Identify the three to five most important pages in your conversion path, define what a visitor at each stage is likely thinking or questioning, and build your trigger logic and message copy to meet those specific needs. This is not a one-afternoon project, but the setup investment pays compounding returns because every future visitor benefits from the optimized experience without additional effort from your team.

Building Your Own Testing Roadmap for Proactive Chat

Running these experiments without a structured testing framework produces noise rather than insight. The foundation of any reliable chat optimization program is defining a single primary metric for each test — whether that is proactive message open rate, reply rate, lead capture rate, or downstream demo conversion — and resisting the temptation to optimize for multiple outcomes simultaneously. Clean metrics produce clear decisions.

Start with placement and trigger tests because they require no copywriting skills and produce results quickly. Placement and timing changes can be deployed in minutes and typically reach statistical significance within two weeks on a moderately trafficked site, although the exact time depends on your traffic volume and the size of the effect. Once you have established a high-engagement baseline through placement and trigger optimization, layer in copy and personalization experiments where the nuance of language can be properly isolated from mechanical variables.
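Two calculations come up in every test: the relative lift of the variant over the control, and whether the difference is statistically significant. The sketch below uses a standard two-proportion z-test, which is one reasonable choice and not necessarily the method behind the results in this post. As a check on the arithmetic, experiment four's move from 4.2 to 9.7 percent is a relative lift of roughly 131 percent.

```python
from math import sqrt
from statistics import NormalDist


def relative_lift(control_rate: float, variant_rate: float) -> float:
    """Relative improvement of the variant over the control (0.25 means +25%)."""
    return (variant_rate - control_rate) / control_rate


def two_proportion_p_value(conv_a: int, n_a: int, conv_b: int, n_b: int) -> float:
    """Two-sided p-value for the difference between two conversion rates."""
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        return 1.0  # no conversions (or all conversions) in both arms
    z = (conv_b / n_b - conv_a / n_a) / se
    return 2 * (1 - NormalDist().cdf(abs(z)))


# Hypothetical counts for illustration only, not data from the experiments.
p = two_proportion_p_value(conv_a=50, n_a=1000, conv_b=80, n_b=1000)
is_significant = p < 0.05
```

The z-test assumes reasonably large samples in both arms; with only a handful of conversions per variant, keep the test running rather than reading the p-value.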

Document every experiment in a shared log that captures the hypothesis, the control setup, the variant setup, the traffic split, the duration, and the results. This documentation becomes a competitive asset over time. Teams that maintain rigorous experiment records can onboard new members faster, avoid re-testing hypotheses that have already been resolved, and identify patterns across experiments that inform future testing priorities. Institutional knowledge about what works on your specific site and with your specific audience is irreplaceable.
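A shared log can be as simple as one structured record per experiment. The sketch below shows one possible shape, with field names chosen for illustration; the example entry describes experiment four, and its traffic split and duration are placeholders because the post does not state them.

```python
import json
from dataclasses import dataclass, asdict


@dataclass
class ExperimentRecord:
    name: str
    hypothesis: str
    primary_metric: str
    control: str
    variant: str
    traffic_split: str   # e.g. "50/50"
    duration_days: int
    result: str


def to_log_line(record: ExperimentRecord) -> str:
    """Serialize one record as a single JSON line for an append-only log."""
    return json.dumps(asdict(record), sort_keys=True)


example = ExperimentRecord(
    name="scroll-depth-vs-timer",
    hypothesis="A 60% scroll-depth trigger beats a 30-second timer on long-form pages",
    primary_metric="engagement_rate",
    control="30-second time-on-page trigger",
    variant="60% scroll-depth trigger",
    traffic_split="50/50",   # placeholder, not stated in the post
    duration_days=14,        # placeholder, not stated in the post
    result="engagement rate 4.2% -> 9.7%",
)
log_line = to_log_line(example)
```

One JSON line per experiment keeps the log easy to append to, search, and load into a spreadsheet later.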

Finally, treat your proactive chat setup as a living system rather than a configuration you finish and forget. Visitor behavior changes as your traffic sources shift, your product evolves, and your competitive landscape moves. Schedule a quarterly audit of your trigger logic, placement decisions, and message copy to ensure your setup reflects current visitor intent rather than assumptions made months or years ago. The teams that maintain this discipline consistently outperform those that optimize once and consider the work complete. Proactive chat is not a feature you turn on; it is a performance channel you actively manage.