Cold email campaigns live or die in the inbox, and your subject line is the only thing standing between a potential customer and the trash folder. Even the most compelling offer, the most personalized message, and the most valuable proposition mean absolutely nothing if your email never gets opened. Subject line testing transforms cold email from guesswork into a predictable system, giving you the data you need to consistently improve open rates and turn cold prospects into warm conversations.

Most sales professionals send the same subject lines repeatedly, hoping for different results while their open rates stagnate at 15-20%. The difference between mediocre and exceptional cold email performance often comes down to systematic subject line testing that reveals what actually resonates with your specific audience. For teams starting from a weak baseline, disciplined testing can double or even triple open rates, creating more opportunities from the same outreach effort.

This framework will show you exactly how to structure subject line tests that generate actionable insights, avoid common testing mistakes that waste time and leads, and build a library of proven subject lines that consistently outperform generic approaches. You’ll learn the testing methodologies that high-performing B2B teams use to reach open rates of 40% or more and turn cold outreach into a reliable pipeline generator.

The Science Behind Subject Line Testing Frameworks

Subject line testing requires a structured approach that isolates variables and produces statistically significant results. Random testing without methodology wastes your prospect list and teaches you nothing actionable. Effective testing starts with minimum sample sizes: treat 100 sends per variant as the absolute floor for drawing any conclusion, and expect to need far more when the difference you want to detect is only a few percentage points, because open rates vary naturally from send to send.
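To see how far 100 sends per variant actually goes, the standard normal-approximation formula for comparing two proportions estimates the sends each arm needs. This is a minimal Python sketch using only the standard library; the function name `sends_per_variant` and the defaults of a 5% significance level and 80% power are illustrative assumptions, not settings this framework prescribes.

```python
import math
from statistics import NormalDist


def sends_per_variant(baseline_rate: float, target_rate: float,
                      alpha: float = 0.05, power: float = 0.80) -> int:
    """Approximate sends needed in EACH arm to detect a change in open rate
    from baseline_rate to target_rate with a two-sided two-proportion z-test."""
    if baseline_rate == target_rate:
        raise ValueError("target_rate must differ from baseline_rate")
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # about 1.96 for alpha = 0.05
    z_beta = NormalDist().inv_cdf(power)           # about 0.84 for 80% power
    p_bar = (baseline_rate + target_rate) / 2      # average rate under the null
    spread_null = math.sqrt(2 * p_bar * (1 - p_bar))
    spread_alt = math.sqrt(baseline_rate * (1 - baseline_rate)
                           + target_rate * (1 - target_rate))
    effect = target_rate - baseline_rate
    return math.ceil(((z_alpha * spread_null + z_beta * spread_alt) / effect) ** 2)


if __name__ == "__main__":
    print(sends_per_variant(0.20, 0.25))  # lifting a 20% open rate to 25%
    print(sends_per_variant(0.20, 0.40))  # doubling a 20% open rate
```

Under these assumptions, detecting a lift from 20% to 25% takes roughly 1,100 sends per variant, while 100 sends per variant is only enough to reliably catch something close to a doubling of the open rate (about 80 sends per arm for 20% to 40%).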

The most reliable testing framework uses A/B splits where you change only one element at a time. If you change subject line length, personalization, and the curiosity hook in the same test, you can’t tell which element drove the result. Disciplined testers modify one variable, measure the impact, implement the winner, then test the next variable against the new control.
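Random assignment is what keeps a one-variable test fair: if your list is sorted by company size or signup date, splitting it top half versus bottom half would mix the effect of the subject line with the effect of the audience. Here is a minimal sketch of a seeded random split; the function name, the arm labels, and the fixed seed are illustrative assumptions.

```python
import random


def split_into_arms(prospects, arm_names, seed=42):
    """Shuffle prospects, then deal them round-robin into equally sized arms.

    Arm sizes differ by at most one when the list doesn't divide evenly.
    """
    shuffled = list(prospects)             # copy so the caller's list is untouched
    random.Random(seed).shuffle(shuffled)  # seeded RNG makes the split reproducible
    arms = {name: [] for name in arm_names}
    for index, prospect in enumerate(shuffled):
        arms[arm_names[index % len(arm_names)]].append(prospect)
    return arms


if __name__ == "__main__":
    emails = [f"prospect{i}@example.com" for i in range(10)]
    arms = split_into_arms(emails, ["control", "variant_b"])
    print({name: len(members) for name, members in arms.items()})
```

Use a different seed for each test so the same prospects don't keep landing in the control arm.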

Statistical significance matters more than most marketers realize. A subject line that beats the control by three percentage points across 50 sends is almost certainly noise, and even across 500 sends per variant a gap that small can fall within normal variation (a 20% versus 23% open rate at that volume is not significant at the conventional 5% level). Calculate a p-value or confidence interval before declaring a winner, and never stop testing after a single victory, because preferences shift as markets evolve.
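One common way to check a result is a two-proportion z-test, paired with a confidence interval on the difference in open rates. The sketch below is a standard-library implementation under the usual normal approximation (reasonable once each arm has at least a few dozen opens and non-opens); `compare_open_rates` is an illustrative name, not a reference to any particular tool.

```python
import math
from statistics import NormalDist


def compare_open_rates(opens_a, sends_a, opens_b, sends_b, confidence=0.95):
    """Two-sided two-proportion z-test of variant B against control A."""
    rate_a = opens_a / sends_a
    rate_b = opens_b / sends_b
    diff = rate_b - rate_a

    # Pooled rate assumes both subject lines perform the same (the null hypothesis).
    pooled = (opens_a + opens_b) / (sends_a + sends_b)
    se_null = math.sqrt(pooled * (1 - pooled) * (1 / sends_a + 1 / sends_b))
    if se_null == 0:
        p_value = 1.0  # every send opened, or none did: no evidence either way
    else:
        p_value = 2 * (1 - NormalDist().cdf(abs(diff / se_null)))

    # Unpooled standard error for the confidence interval on the difference.
    se_diff = math.sqrt(rate_a * (1 - rate_a) / sends_a
                        + rate_b * (1 - rate_b) / sends_b)
    z_crit = NormalDist().inv_cdf(1 - (1 - confidence) / 2)
    return {
        "difference": diff,
        "p_value": p_value,
        "ci": (diff - z_crit * se_diff, diff + z_crit * se_diff),
    }


if __name__ == "__main__":
    # 500 sends per arm: 20% versus 23% open rate
    print(compare_open_rates(100, 500, 115, 500))
```

With 100 versus 115 opens on 500 sends each, the p-value comes out around 0.25 and the 95% interval on the difference runs from roughly -2 to +8 percentage points, so the three-point gap is not yet a reliable win.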

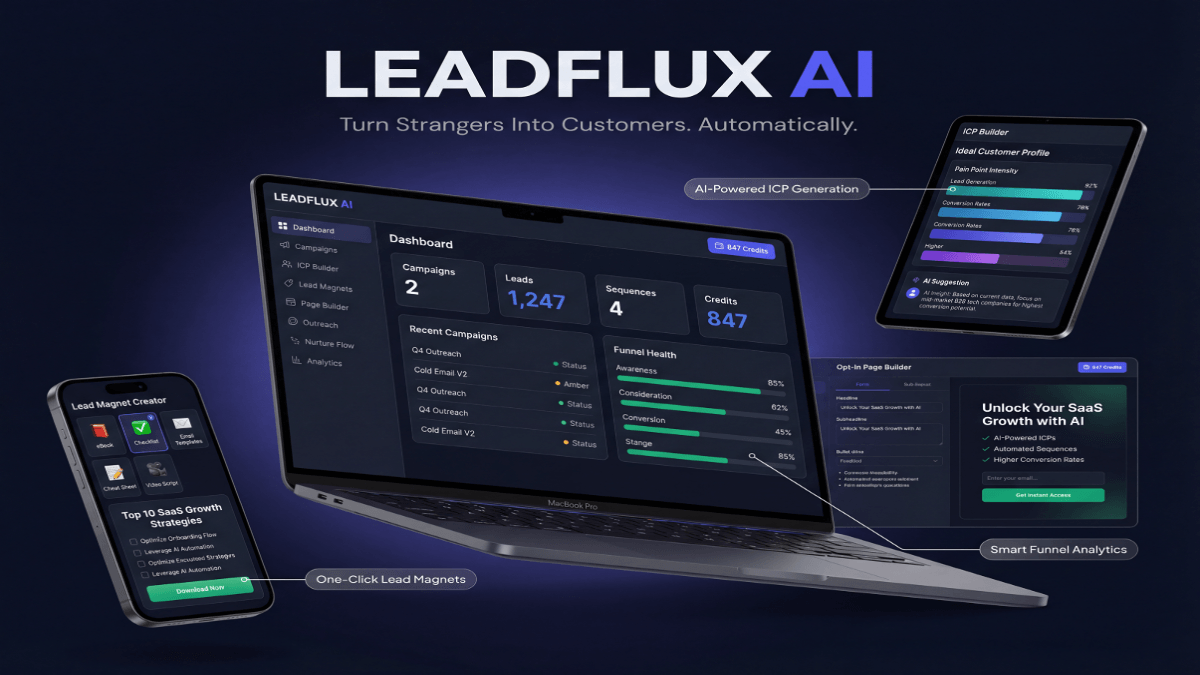

Control groups provide the baseline that makes all testing meaningful. Keep sending your current best-performing subject line to a portion of your list while testing new variants against it. This approach accounts for external factors like day of week, time of send, and seasonal variations that could artificially inflate or deflate your test results.

Essential Testing Variables to Prioritize

Length testing reveals optimal character counts for your specific audience. Some segments respond to concise 3-4 word subject lines that create urgency, while others prefer descriptive 8-10 word subjects that clearly communicate value. Test progressively shorter and longer variants against your control to find the sweet spot where open rates peak.

Personalization level testing determines how much customization drives engagement without triggering skepticism. Test company name insertion versus role-based personalization versus first name usage versus no personalization at all. Many senders discover that moderate personalization outperforms heavy customization, which can feel manipulative when prospects know you’re sending cold emails.

Curiosity versus clarity represents perhaps the most important testing axis. Curiosity-driven subjects like “Quick question about [Company]” generate opens through intrigue, while clarity-focused subjects like “Reducing customer acquisition costs by 30%” lead with value. Test both approaches systematically—the winner varies dramatically by industry, seniority level, and company size.

Building Your Testing Calendar

Weekly testing cadences work well for most B2B cold email programs with enough volume. Launch one new test every week, measure results over seven days, implement the winner, then start the next test. This rhythm allows up to 52 optimization cycles a year, provided each week’s sends are large enough for every variant to reach the sample size the test needs; lower-volume programs should let a single test run across several weeks instead.
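A quick way to sanity-check the weekly cadence is to divide the total sends a test needs by the sends you can make in a week. This is a minimal sketch; `weeks_to_complete` and the example volumes are illustrative assumptions, and the per-variant figure would come from a sample-size calculation for your own baseline open rate.

```python
import math


def weeks_to_complete(sends_per_variant: int, variants: int,
                      weekly_send_capacity: int) -> int:
    """Whole weeks needed to give every variant (control included) its full sample."""
    if weekly_send_capacity <= 0:
        raise ValueError("weekly_send_capacity must be positive")
    total_sends = sends_per_variant * variants
    return math.ceil(total_sends / weekly_send_capacity)


if __name__ == "__main__":
    # Control plus one challenger, about 1,100 sends each, 1,500 sends per week
    print(weeks_to_complete(1100, 2, 1500))
```

In that example the test needs two weeks, so a strict one-test-per-week rhythm only works once weekly volume reaches about 2,200 sends.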

Monthly analysis sessions turn individual test results into strategic insights. Review all tests from the previous month together, looking for patterns across multiple experiments. You might discover that questions outperform statements, that numbers beat adjectives, or that industry-specific terminology drives higher engagement than general business language.

Quarterly comprehensive reviews identify macro trends and inform messaging strategy. Aggregate six months of testing data to understand how your audience preferences evolve, which subject line families consistently perform, and where you should focus future testing efforts. This strategic view prevents you from optimizing tactics while missing larger patterns.

Subject Line Categories That Drive Cold Email Opens

Question-based subject lines tap into natural human psychology that compels people to seek answers. When you ask “Are you struggling with [specific problem]?” the recipient mentally engages with the question before deciding whether to open. Test different question types—yes/no questions versus open-ended questions versus rhetorical questions—to determine which format resonates with your audience.

Value proposition subjects lead with concrete benefits that immediately communicate what the recipient gains. Subject lines like “3 ways to cut onboarding time in half” or “The tool that saves product teams 10 hours weekly” work when your offer delivers clear, quantifiable value. Test specific numbers against ranges, percentages against absolute values, and time savings against cost savings to optimize this approach.

Pattern interrupt subjects break through inbox noise by violating expectations in productive ways. Unconventional approaches like “I need your help” or “This might not be relevant” can generate curiosity opens when used strategically. Test pattern interrupts carefully—they burn out quickly if overused and can damage credibility if the email body doesn’t justify the unusual subject.

Social proof subjects leverage authority and validation to drive opens. References to mutual connections, recent funding rounds, or industry recognition signal legitimacy before the email opens. Test different proof types against your baseline to discover whether prospects respond more to peer validation, authority figures, or company achievements.

Personalization Tokens That Actually Work

Company name personalization remains effective when deployed strategically. Subject lines that reference the prospect’s company show you’ve done basic research and aren’t mass blasting. Test placement of company names at the beginning versus middle versus end of subject lines, and measure whether abbreviated names perform differently than full company names.

Role-specific customization demonstrates understanding of the recipient’s responsibilities and challenges. Subject lines crafted specifically for CFOs, VPs of Sales, or product managers signal relevance immediately. Build role-specific subject line variants and test them against generic alternatives to quantify the value of this additional segmentation.

Trigger event references create timely relevance that generic subjects can’t match. Mentioning a recent product launch, executive hire, or funding announcement proves you’re paying attention. Test how recent triggers need to be—does a one-week-old announcement still drive engagement, or do you need to reference events from the past 48 hours?

Advanced Testing Methodologies for Sophisticated Campaigns

Testing three or four subject line variants at once (A/B/n testing, often loosely called multivariate testing) expands beyond simple A/B comparisons when you have sufficient volume to support more complex experiments. Make sure each variant gets enough sends to produce reliable data on its own, and account for the extra comparisons when judging significance. This approach accelerates learning but requires larger prospect lists and more sophisticated tracking to avoid splitting your audience too thinly.
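Comparing several variants against one control multiplies the chances that one of them "wins" by luck. A simple, conservative guard is the Bonferroni correction, which divides the significance threshold by the number of comparisons. This sketch applies it to pairwise z-tests against the control; the function names and sample counts are illustrative assumptions, and Bonferroni is one option among several multiple-comparison corrections.

```python
import math
from statistics import NormalDist


def two_sided_p_value(opens_a, sends_a, opens_b, sends_b):
    """Two-proportion z-test p-value using the pooled open rate."""
    pooled = (opens_a + opens_b) / (sends_a + sends_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / sends_a + 1 / sends_b))
    if se == 0:
        return 1.0
    z = (opens_b / sends_b - opens_a / sends_a) / se
    return 2 * (1 - NormalDist().cdf(abs(z)))


def winners_vs_control(control, variants, alpha=0.05):
    """control is (opens, sends); variants maps a label to (opens, sends).

    A variant counts as a winner only if it beats the control's open rate and
    its p-value clears the Bonferroni-adjusted threshold alpha / len(variants).
    """
    threshold = alpha / len(variants)
    control_opens, control_sends = control
    winners = []
    for label, (opens, sends) in variants.items():
        beats_control = opens / sends > control_opens / control_sends
        p_value = two_sided_p_value(control_opens, control_sends, opens, sends)
        if beats_control and p_value < threshold:
            winners.append(label)
    return winners


if __name__ == "__main__":
    variants = {"question": (150, 500), "numbers": (115, 500), "short": (95, 500)}
    print(winners_vs_control((100, 500), variants))
```

A variant with a p-value of about 0.035 would pass in a plain A/B test but not when it is one of three challengers, which is the price of testing more variants from the same list.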

Sequential testing builds winning subject lines through iterative improvement. Start with a baseline, test one variable, implement the winner, then test a second variable against your new baseline. This methodology compounds improvements over time, with each test building on previous learnings to create subject lines that dramatically outperform your original approach.

Segment-specific testing recognizes that different audience segments respond to different messaging approaches. C-suite executives might prefer concise, results-focused subjects while mid-level managers engage more with tactical, how-to oriented lines. Create separate testing tracks for each major segment in your database, building customized subject line libraries that speak directly to each group’s priorities.

Time-based testing isolates the impact of send timing from subject line performance. Test identical subject lines at different times of day and days of week to understand when your audience is most receptive. This creates a comprehensive optimization strategy where you match the right message with the right timing for maximum open rates.

Measuring What Actually Matters

Open rate represents the primary success metric for subject line testing, but sophisticated marketers track multiple layers of engagement. Monitor not just whether people open, but whether they click, reply, or take desired actions. A subject line that generates 50% opens but zero replies is worse than one driving 30% opens and a 5% reply rate.
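Normalizing every variant to replies per 1,000 sends makes the trade-off in that example explicit. This is a minimal sketch, assuming the 5% reply rate is measured against sends rather than opens; the function names and data layout are illustrative.

```python
def funnel(sends: int, opens: int, replies: int) -> dict:
    """Open rate, reply rate, and replies per 1,000 sends for one subject line."""
    return {
        "open_rate": opens / sends,
        "reply_rate": replies / sends,
        "replies_per_1000_sends": 1000 * replies / sends,
    }


def rank_by_replies(results: dict) -> list:
    """results maps a subject line to (sends, opens, replies); best first."""
    return sorted(results,
                  key=lambda subject: funnel(*results[subject])["replies_per_1000_sends"],
                  reverse=True)


if __name__ == "__main__":
    results = {
        "High-open subject": (1000, 500, 0),    # 50% opens, no replies
        "Lower-open subject": (1000, 300, 50),  # 30% opens, 5% of sends reply
    }
    for subject in rank_by_replies(results):
        print(subject, funnel(*results[subject]))
```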

Time to open provides insight into subject line urgency and priority. Track whether winning subjects generate opens within the first hour, first day, or over multiple days. Subjects that drive immediate opens signal strong relevance and urgency, while delayed opens might indicate the message is interesting but not pressing.

Device-specific open patterns reveal optimization opportunities for mobile versus desktop audiences. If 70% of your opens happen on mobile devices, test subject lines that fit within mobile preview limits and avoid special characters that render poorly on phones. Segment your analysis by device type to understand how truncated previews on smaller screens affect subject line performance.
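A rough pre-send check can flag subject lines likely to be cut off in a mobile preview and list characters worth testing on real devices. Preview widths vary by email client, screen size, and orientation, so the 35-character default below is an assumption to replace with limits you measure; `preview_check` and the example company name are illustrative.

```python
def preview_check(subject: str, preview_chars: int = 35) -> dict:
    """Estimate how a subject line might look in a narrow mobile preview."""
    fits = len(subject) <= preview_chars
    visible = subject if fits else subject[:preview_chars].rstrip() + "..."
    # Non-ASCII characters (emoji, curly quotes, symbols) are the ones most
    # likely to render inconsistently, so list them for manual review.
    non_ascii = sorted({ch for ch in subject if ord(ch) > 127})
    return {"fits": fits, "visible": visible, "non_ascii": non_ascii}


if __name__ == "__main__":
    for subject in ["Quick question about Acme",
                    "Reducing customer acquisition costs by 30%"]:
        print(preview_check(subject))
```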

Common Testing Mistakes That Sabotage Results

Testing too many variables simultaneously destroys your ability to understand what actually drove results. When you change subject line length, personalization level, and emotional tone all at once, you can’t attribute performance to any specific element. Winning tests tell you nothing actionable, and losing tests don’t reveal what to fix.

Insufficient sample sizes lead to false conclusions that damage your cold email program. Testing subject lines across 30 or 40 sends might show one variant winning by 10 percentage points, but that difference could easily be random variation. Treat 100 sends per variant as a bare minimum before drawing conclusions, and use a statistical significance calculator to validate every result.

Confirmation bias causes marketers to see patterns that don’t exist and ignore data that contradicts their assumptions. You might believe questions always outperform statements, so you interpret a 2% difference as validation while dismissing a contradictory result as an anomaly. Document your predictions before running tests, then honestly assess whether results support or refute your hypothesis.

Testing duration mismatches create skewed results that don’t reflect true performance. Running one test for three days and another for ten days introduces timing variables that contaminate your data. Standardize testing windows—typically five to seven business days—to ensure fair comparisons across all experiments.

“The best subject line testers embrace failure as data. Every losing variant teaches you something about your audience’s preferences, refining your understanding of what drives engagement. Most marketers quit testing after a few unsuccessful experiments, but the real insights come from running 20, 30, or 50 tests that systematically map your audience’s response patterns.”

List Fatigue and Testing Frequency

Overtesting burns through your prospect list without generating proportional insights. Each test consumes part of your database, and contacting the same prospects too frequently reduces response rates across all messages. Balance aggressive testing with list preservation by limiting each prospect to one test email per month and maintaining a control group that receives proven subject lines.

Rotation strategies prevent prospect exhaustion while maintaining testing velocity. Divide your total prospect list into segments, cycling which segment receives test messages each week. This approach lets you run weekly tests without hammering the same prospects repeatedly, extending the productive life of your database.
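One way to implement rotation is to give every prospect a stable segment number derived from a hash of their email address, then activate one segment per week. With four segments, each prospect lands in a test roughly once every four weeks, which lines up with the one-test-email-per-month limit described above. The function names and the choice of SHA-256 are illustrative assumptions; a stable hash is used instead of Python's built-in `hash()`, which is randomized per process for strings.

```python
import hashlib


def segment_for(email: str, num_segments: int) -> int:
    """Stable segment number for a prospect; the same email always maps the same way."""
    normalized = email.strip().lower().encode("utf-8")
    digest = hashlib.sha256(normalized).hexdigest()
    return int(digest, 16) % num_segments


def prospects_for_week(prospects, week_number: int, num_segments: int = 4):
    """Prospects in the segment whose turn it is this week."""
    active_segment = week_number % num_segments
    return [p for p in prospects if segment_for(p, num_segments) == active_segment]


if __name__ == "__main__":
    prospects = [f"prospect{i}@example.com" for i in range(20)]
    for week in range(4):
        print(week, len(prospects_for_week(prospects, week)))
```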

Fresh list injection keeps testing results relevant by ensuring you’re measuring current market preferences. As you exhaust older leads through testing, add new prospects who haven’t seen any of your subject lines. This mix of tested and fresh prospects provides both continuity for comparison and new audiences for validation.

Implementing Your Testing Results Into Systematic Improvement

Documentation transforms individual test results into institutional knowledge that compounds over time. Create a testing database that records every subject line tested, the variant it was tested against, send volume, open rates, and any relevant context about timing or audience. This archive becomes increasingly valuable as patterns emerge across dozens of experiments.
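Here is a minimal sketch of such a testing log as a Python dataclass serialized to CSV, so it can live in a spreadsheet or be loaded for later analysis. The field names mirror the items listed in the paragraph above plus a reply count; they, and the function names, are illustrative assumptions rather than a required schema.

```python
import csv
import io
from dataclasses import asdict, dataclass, fields


@dataclass
class SubjectLineTest:
    test_id: str
    subject_line: str
    tested_against: str   # the control or rival subject line
    segment: str          # audience this test ran on
    send_window: str      # timing context, e.g. "Tue-Thu, 9-11am"
    sends: int
    opens: int
    replies: int
    notes: str = ""

    @property
    def open_rate(self) -> float:
        return self.opens / self.sends if self.sends else 0.0


FIELDNAMES = [f.name for f in fields(SubjectLineTest)]
INT_FIELDS = ("sends", "opens", "replies")


def write_tests(stream, tests) -> None:
    """Write a header row plus one row per test to any text stream (file or StringIO)."""
    writer = csv.DictWriter(stream, fieldnames=FIELDNAMES)
    writer.writeheader()
    for test in tests:
        writer.writerow(asdict(test))


def read_tests(stream) -> list:
    """Load tests back, converting the numeric columns from text."""
    loaded = []
    for row in csv.DictReader(stream):
        for key in INT_FIELDS:
            row[key] = int(row[key])
        loaded.append(SubjectLineTest(**row))
    return loaded


if __name__ == "__main__":
    buffer = io.StringIO()
    write_tests(buffer, [SubjectLineTest("T-001", "Quick question about Acme",
                                         "Ideas for Acme's onboarding", "VP Sales",
                                         "Tue-Thu, 9-11am", 500, 115, 9)])
    buffer.seek(0)
    print(read_tests(buffer))
```

When writing to a file on disk, open it with `newline=""`, as the `csv` module documentation recommends.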

Subject line libraries give your sales team proven templates they can customize for different situations. Organize your winners by category, audience segment, and use case so team members can quickly find high-performing approaches. Include usage notes that explain why each subject line works and what contexts suit it best.

Continuous optimization cycles prevent complacency and adapt to changing market conditions. Markets evolve, inbox algorithms shift, and audience preferences change over time. Subject lines that crushed it last quarter might underperform today, so implement quarterly refresh testing where you challenge even your best performers with new variants.

Team training ensures that testing insights actually influence daily outreach activities. Schedule monthly knowledge-sharing sessions where you review recent test results, discuss implications for messaging strategy, and update team members on current best practices. Testing only creates value when learnings get implemented across your entire cold email operation.

Building Predictive Models from Test Data

Pattern recognition across multiple tests reveals principles that guide subject line creation. After running 30-40 tests, analyze your data for consistent patterns—do certain word types always perform well, do questions outperform statements for specific segments, do numbers boost engagement? These patterns become rules that inform future subject line development.

Predictive scoring helps prioritize which subject lines to test next. Develop a simple scoring system based on your accumulated learnings—subjects that incorporate multiple winning elements get higher priority for testing. This strategic approach focuses testing effort on variants most likely to outperform your current baseline.
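Here is a minimal sketch of that kind of scoring: estimate each element's average lift from past single-variable tests, then score a candidate subject line by summing the lifts of the elements it uses. Summing assumes the elements add up independently, which real audiences may not respect, so treat the score as a way to order the test queue rather than a forecast of open rate. The element labels, lift figures, and function names are hypothetical.

```python
from collections import defaultdict


def element_lift(history):
    """history: (element_tested, control_open_rate, variant_open_rate) tuples.

    Returns the average open-rate lift observed for each element.
    """
    lifts = defaultdict(list)
    for element, control_rate, variant_rate in history:
        lifts[element].append(variant_rate - control_rate)
    return {element: sum(values) / len(values) for element, values in lifts.items()}


def rank_candidates(candidates, lifts):
    """candidates maps a subject line to the set of elements it uses."""
    def score(subject):
        return sum(lifts.get(element, 0.0) for element in candidates[subject])
    return sorted(candidates, key=score, reverse=True)


if __name__ == "__main__":
    history = [
        ("question", 0.20, 0.23),
        ("question", 0.22, 0.24),
        ("company_name", 0.20, 0.22),
        ("emoji", 0.21, 0.19),
    ]
    lifts = element_lift(history)
    candidates = {
        "Quick question about Acme's onboarding?": {"question", "company_name"},
        "Onboarding ideas for your team": set(),
        "Fresh onboarding idea (emoji variant)": {"emoji"},
    }
    print(rank_candidates(candidates, lifts))
```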

Cross-campaign insights emerge when you analyze subject line performance across different products, segments, or campaigns. A pattern that holds true across multiple contexts probably reflects a fundamental truth about your audience. Insights that appear in only one campaign might be situational rather than universal, requiring additional validation before broad implementation.

Cold email subject line testing separates professional outreach operations from amateur hour. The discipline of systematic testing, the patience to run proper experiments, and the commitment to implementing learnings transform cold email from spray-and-pray into a refined system that consistently opens doors with ideal prospects. Start with simple A/B tests, build momentum through weekly experimentation, and watch as your open rates climb while your messaging becomes increasingly aligned with what your market actually responds to. Every test makes you smarter about your audience, and every insight compounds into better results across your entire cold outreach program.